Types tell you what an agent is for. Components tell you what it's made of.

The first post of this series named the five types (Assistant, Analyst, Tasker, Orchestrator, Guardian) and the boundary rules that keep each one from drifting into another's lane. Those types are roles. They describe what a given agent does inside a workflow. They don't describe what the agent is actually built from, and without that second layer the types stay abstract. You can name an Analyst without knowing what the Analyst is made of, and then you design the Analyst wrong because you never asked the anatomy question.

This write-up is the anatomy question.

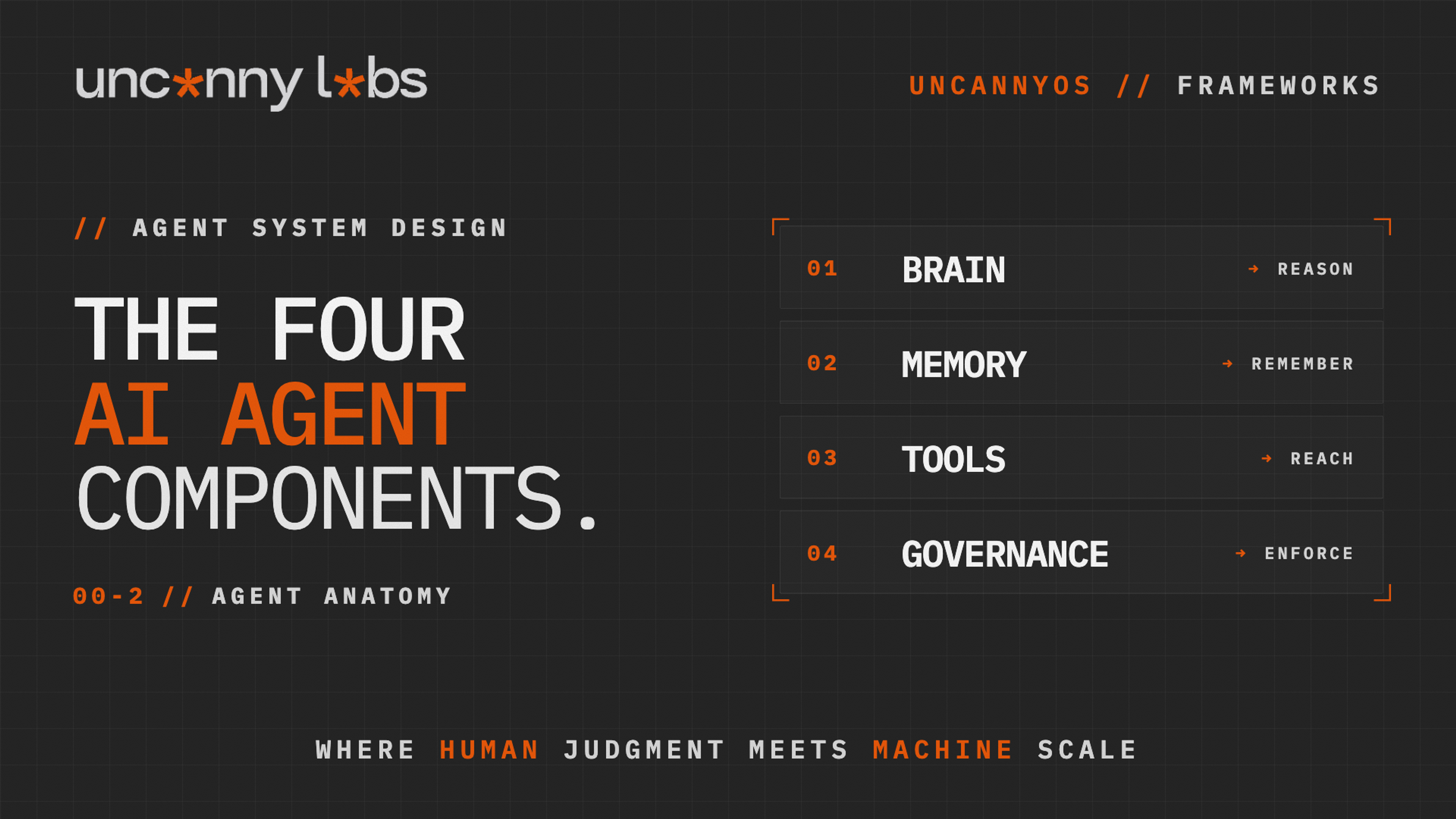

Every AI agent, regardless of its type, is built from four components. A Brain that reasons. A Memory that remembers. Tools that reach into the outside world. A Governance layer that enforces limits and handles escalation. Missing any one of the four creates a gap. The gap is not always obvious at design time, but it is always obvious at the post-mortem.

The four components are the second primitive of the Agent System Design we use at Uncanny Labs to build every workflow we ship, inside Uncanny Works (our productized service-as-a-software AI Workforces) and across every client engagement. Once you know what roles exist (Part 1) and what each role is made of (this article), the next question is how roles compose. That's Part 3. Then how they earn trust, which is Part 4. Then a full walkthrough of how it all fits together in Part 5.

What follows is the anatomy.

---

1. The Brain — The Core Reasoning Engine

The Brain is the component that reasons. It is the model plus the system prompt plus the configuration that tells the model how to think. When people say "the agent thinks" or "the agent decides," the thinking and deciding happen inside the Brain: a language model running against a prompt designed to make it behave in a particular way.

Four design choices live in the Brain. First, the model itself: a capability tier matched to the complexity of the task. Second, the system prompt: the role definition, the tone, the operational rules, the examples, the escalation conditions. Third, the configuration: temperature, output format, reasoning depth, whether chain-of-thought is required, whether structured output is enforced. And fourth, the implicit choice sitting underneath all of the above: what the model has learned from its training data, which shapes how the Brain reasons before the system prompt ever touches it.

The design principle — the Brain must match the task's complexity, not the system's ambition. An Assistant summarizing meeting notes does not need the top-tier frontier model; a cheaper model with a tight prompt produces the same output at a tenth of the cost. An Analyst scoring a complex risk profile against twelve dimensions needs a Brain that can hold all twelve in working memory at once; an underpowered model will either fake it or silently drop dimensions from the score. The cost of an overkill Brain is tokens. The cost of an underkill Brain is quiet errors that surface later and more expensively.
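A minimal sketch of matching the Brain to the task's complexity rather than the system's ambition. It assumes a hypothetical three-tier catalog ("small", "mid", "frontier") and a crude complexity score: how many dimensions the task must reason over at once, plus a bonus for multi-step reasoning. The tier names and thresholds are illustrative, not recommendations.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    dimensions: int   # how many factors must be reasoned over at once
    multi_step: bool  # does the task require chained reasoning?


def select_model_tier(task: Task) -> str:
    """Pick the cheapest (hypothetical) tier whose assumed capacity covers the task."""
    score = task.dimensions + (3 if task.multi_step else 0)
    if score <= 3:
        return "small"      # e.g. summarizing meeting notes
    if score <= 8:
        return "mid"
    return "frontier"       # e.g. a twelve-dimension risk profile


print(select_model_tier(Task("summarize notes", dimensions=2, multi_step=False)))  # small
print(select_model_tier(Task("risk profile", dimensions=12, multi_step=True)))     # frontier
```

The point of the design is the default: start at the cheapest tier and let the task's measured load push it up, instead of starting at the top and never coming down.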

The most commonly overlooked Brain decision is the implicit one. Every model encodes the biases of its training data. A model trained primarily on Western English-language internet text embeds Western ethical intuitions, Western narrative conventions, and Western business norms by default, inconsistently, and invisibly. LLMs learn patterns of justification, blame, care, and obligation from the training corpus, not from your organization's values. The Brain you chose is reasoning according to priors you did not set. The design response is to run an implicit ethics audit when you select a model for a sensitive role, and to document the assumptions the Brain is working from so they can be checked during deployment.

The anti-pattern to avoid is the one-Brain-fits-all approach: using the same model and the same generic prompt across every agent type in the system. Different types carry different reasoning loads. An Assistant that prepares material, an Analyst that interprets it, and an Orchestrator that decomposes a task each place different demands on the Brain. Collapsing all three into one model with one prompt flattens the specialization the types were supposed to provide.
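One way to keep the Brain explicit per type is to give each type its own configuration record covering the choices above: model, system prompt, configuration, and the documented training-data priors the ethics audit surfaced. This is a sketch; the model identifiers and prompts are hypothetical placeholders, and the check simply flags the one-Brain-fits-all anti-pattern when every type shares the same model and prompt.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BrainConfig:
    model: str                                # hypothetical model identifier
    system_prompt: str                        # role, rules, escalation conditions
    temperature: float = 0.2
    structured_output: bool = False
    documented_priors: tuple[str, ...] = ()   # known training-data assumptions to check


BRAINS = {
    "Assistant": BrainConfig("small-model", "You prepare material. Draft only; never send.",
                             temperature=0.4),
    "Analyst": BrainConfig("frontier-model", "You interpret material and score it on the rubric.",
                           temperature=0.0, structured_output=True,
                           documented_priors=("Western business norms",)),
    "Orchestrator": BrainConfig("mid-model", "You decompose tasks and assign them to workers.",
                                temperature=0.1, structured_output=True),
}


def is_one_brain_fits_all(brains: dict[str, BrainConfig]) -> bool:
    """Flag the anti-pattern: every agent type shares the same model and prompt."""
    return len({(b.model, b.system_prompt) for b in brains.values()}) == 1
```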

---

2. The Memory — Context and State

The Memory is what the agent knows and remembers. It has two layers. Working memory is the context the agent holds during a single run. Persistent memory is the state the agent carries between runs. Both need to be designed, not assumed.

Working memory lives in the context window. It contains the system prompt, the user's request, the intermediate reasoning, any retrieved documents, and the recent conversation history. Every token in the context window costs money and, more importantly, dilutes the Brain's attention. A context that is too sparse starves the Brain of what it needs. A context that is too full buries the relevant material under noise and the Brain starts to ignore or misweight it. The design question is always the same: what does this agent need to see right now, in this run, to do its job, and how do we get exactly that into the context without adding the rest?
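A minimal sketch of answering that question under a token budget: the system prompt and request always go in, then retrieved chunks are added in order of relevance until the budget is spent, and anything below a relevance floor is treated as noise. The word-count tokenizer, the relevance scores, and the 0.5 floor are all assumptions standing in for a real tokenizer and retriever.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    relevance: float  # assumed score from your retriever, 0..1


def approx_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: roughly one token per word.
    return len(text.split())


def build_context(system_prompt: str, request: str, chunks: list[Chunk],
                  budget: int, min_relevance: float = 0.5) -> list[str]:
    """Always include prompt and request; add the most relevant chunks that still fit."""
    parts = [system_prompt, request]
    used = sum(approx_tokens(p) for p in parts)
    for chunk in sorted(chunks, key=lambda c: c.relevance, reverse=True):
        if chunk.relevance < min_relevance:
            break                  # everything after this is less relevant still
        cost = approx_tokens(chunk.text)
        if used + cost > budget:
            continue               # skip chunks that would overflow the budget
        parts.append(chunk.text)
        used += cost
    return parts
```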

Persistent memory is harder. Some agents are stateless by design. A Tasker that sends a templated email does not need to remember what it sent last week. Some agents must be stateful. An Orchestrator coordinating a multi-week workflow needs to track where the work is, what's been done, what's pending, what's escalated. When persistent memory is required, it lives in an external store (a database, a vector index, a RAG layer, a structured memory file), and the agent reads from and writes to that store through explicit tool calls. The memory is not in the Brain. The memory is in the system, and the Brain has a tool that reads from it.

The design principle — Memory is either explicit and bounded, or it is a liability. Every piece of persistent memory the agent accesses should have a defined source, a defined retrieval logic, and a defined scope. An agent that pulls "anything relevant" from an unbounded memory store will eventually pull the wrong thing. An agent that pulls from an explicit, scoped, versioned memory store will behave consistently because its inputs are consistent.
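A sketch of persistent memory that lives in the system rather than the Brain: a store with a defined scope and a version counter, reachable only through two functions the agent would expose as tool calls. The scope string and in-process dict are hypothetical; a production store would be a database or vector index behind the same two calls.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ScopedMemory:
    """Persistent memory with one defined scope; the Brain reaches it only via read/write."""
    scope: str                                   # e.g. a single workflow ID (hypothetical)
    version: int = 1
    records: dict[str, str] = field(default_factory=dict, repr=False)

    def _check(self, scope: str) -> None:
        if scope != self.scope:
            raise PermissionError(f"scope {scope!r} is not allowed")

    def read(self, scope: str, key: str) -> Optional[str]:
        self._check(scope)
        return self.records.get(key)

    def write(self, scope: str, key: str, value: str) -> None:
        self._check(scope)
        self.records[key] = value
        self.version += 1                        # every write produces a new version
```

Because scope is checked on every call, an agent asking for "anything relevant" outside its workflow gets an error instead of a plausible-looking answer.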

A practical design rule worth naming is the distinction between knowledge that belongs in the system prompt and knowledge that belongs in a retrieval layer. Smaller corpora and behavior-shaping knowledge go directly into the prompt. Larger corpora the agent needs to search at run time go into RAG. The choice is not cosmetic. Prompt-embedded knowledge shapes how the agent thinks; RAG-retrieved knowledge shapes what the agent knows. Putting behavior-shaping rules in RAG is why the agent sometimes follows them and sometimes doesn't: the rule simply wasn't retrieved into the context on the runs where it failed. Putting reference data in the prompt is why the context window fills up, attention degrades, and the agent slows down. Match the memory type to the memory's job.
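The placement rule can be written as a small decision function. This is a sketch under two assumptions: each knowledge item is tagged by whether it shapes behavior, and "small" means under a hypothetical 500-token limit.

```python
from dataclasses import dataclass


@dataclass
class Knowledge:
    name: str
    shapes_behavior: bool  # a rule the agent must follow on every run?
    tokens: int            # approximate size


def place_knowledge(item: Knowledge, small_corpus_limit: int = 500) -> str:
    """Decide whether an item belongs in the system prompt or in a retrieval layer."""
    if item.shapes_behavior:
        return "system_prompt"   # must be present on every run, not retrieved only sometimes
    if item.tokens <= small_corpus_limit:
        return "system_prompt"   # small reference data is cheap enough to embed
    return "retrieval"           # large reference data is searched at run time
```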

A second failure mode is source contradiction. When the agent's memory pulls from two sources that disagree with each other, performance degrades in ways that are hard to diagnose. One document in the RAG store says the policy is X. Another says it is Y. The agent gets both, reasons over both, and produces an output that splits the difference in a way nobody authored. The fix is to treat every memory source as something that has to be reconciled upstream of the agent. If two sources can disagree, the system needs a Guardian or a policy layer that enforces which source wins. The agent should never see both unreconciled.
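A minimal sketch of reconciling sources upstream of the agent: a precedence table owned by the policy layer picks exactly one document per policy key, and unranked sources never reach the agent at all. The source names and the precedence order are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PolicyDoc:
    source: str  # hypothetical source names, e.g. "policy_handbook_v3"
    key: str     # the policy this document speaks to
    value: str


# Assumed precedence: earlier in the list wins. The policy layer owns this table, not the agent.
PRECEDENCE = ["legal_register", "policy_handbook_v3", "team_wiki"]


def reconcile(docs: list[PolicyDoc]) -> dict[str, PolicyDoc]:
    """Return exactly one document per policy key, chosen by source precedence."""
    winners: dict[str, PolicyDoc] = {}
    for doc in docs:
        if doc.source not in PRECEDENCE:
            continue  # unranked sources are dropped before the agent sees them
        current = winners.get(doc.key)
        if current is None or PRECEDENCE.index(doc.source) < PRECEDENCE.index(current.source):
            winners[doc.key] = doc
    return winners
```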

The most common Memory failure in production is context poisoning, an agent whose memory has silently filled with outdated, irrelevant, or subtly wrong material that now biases every run. A Writer whose voice calibration memory still references a style guide from two versions ago will produce drafts that read fine but are slightly off-brand. The team notices the drift eventually. The post-mortem finds that the Memory was never pruned or re-validated. The fix is always the same: version the memory, schedule validation, and separate the memory the agent writes from the memory the agent reads.
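Versioning and scheduled validation can be sketched as a pruning pass: an entry is stale if the document it was derived from has moved to a newer version, or if it has not been re-validated within a window. The field names and the 90-day window are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class MemoryEntry:
    key: str
    value: str
    source: str           # document this entry was derived from
    source_version: str   # version of that document at the time
    last_validated: date


def prune(entries: list[MemoryEntry], current_versions: dict[str, str],
          today: date, max_age_days: int = 90) -> tuple[list[MemoryEntry], list[MemoryEntry]]:
    """Split entries into (keep, stale): stale means outdated source or overdue validation."""
    keep: list[MemoryEntry] = []
    stale: list[MemoryEntry] = []
    for e in entries:
        outdated = current_versions.get(e.source) != e.source_version
        overdue = today - e.last_validated > timedelta(days=max_age_days)
        (stale if outdated or overdue else keep).append(e)
    return keep, stale
```

In the Writer example, a voice-calibration entry derived from style guide v4 while v6 is current would land in `stale` on the next scheduled run, instead of quietly shaping drafts.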

The second common failure is the opposite: an agent with no memory at all, running every interaction as if it were the first. Groundhog Day agents frustrate users and waste tokens re-establishing context every run. The fix is to give them the minimum viable persistent memory the task needs, and no more.

---

3. The Tools — Actions and Integrations

The Tools are what the agent can reach. They are the APIs, the databases, the calendars, the email gateways, the document stores, the web search, the code interpreters, every external system the agent is allowed to interact with. The Brain decides. The Tools do.

A well-designed tool is three things at once. It is a function: the agent calls it with structured arguments and gets a structured response. It is a description: the agent reads a definition that tells it what the tool does, when to use it, and what its limitations are. And it is a scope: the permissions it has, the rate limits it respects, the audit trail it generates. Skip any of these three and the tool will eventually do something the system did not intend.

The design principle — every tool needs a purpose, a scope, and an audit trail. Purpose is the one thing the tool does, stated cleanly enough that the Brain knows exactly when to reach for it. Scope is the boundary of what the tool can touch: which records, which channels, which accounts, which time windows, under what conditions. Audit trail is the record of every call the tool made, what it returned, and what the agent did with the result. An unaudited tool call is an action the system cannot reconstruct, and unreconstructable actions are how small problems become large incidents.

Tool description quality matters more than most teams realize. The Brain reads the tool's description to decide whether to use it, and a vague description produces vague usage. A tool described as "send an email" will get called whenever the agent thinks email is relevant, which is more often than you want. A tool described as "send a templated status update email from the support channel to a customer who has an active ticket, one call per ticket per day, does not support freeform body text" will get called exactly when it is appropriate. The description is the Brain's user manual. Write it like documentation, not like a function signature.
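The three parts of a tool (function, description, scope) plus the audit trail can be sketched as one record. The `ToolSpec` class is hypothetical, the email gateway is replaced by a stand-in lambda, and scope here is only the set of accepted arguments; the "one call per ticket per day" limit is stated in the description but not enforced in this sketch. Only successful calls are logged.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable


@dataclass
class ToolSpec:
    name: str
    description: str                 # the Brain's user manual: purpose, when to use, limits
    fn: Callable[..., Any]
    allowed_args: frozenset[str]     # scope: the only arguments this tool accepts
    audit_log: list[dict] = field(default_factory=list)

    def call(self, **kwargs: Any) -> Any:
        unexpected = set(kwargs) - self.allowed_args
        if unexpected:
            raise PermissionError(f"{self.name}: out-of-scope args {sorted(unexpected)}")
        result = self.fn(**kwargs)
        # Record the call, its result, and when it happened so it can be reconstructed later.
        self.audit_log.append({
            "tool": self.name,
            "args": kwargs,
            "result": result,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        return result


send_status_update = ToolSpec(
    name="send_status_update",
    description=("Send a templated status update email from the support channel to a customer "
                 "who has an active ticket. One call per ticket per day. "
                 "Does not support freeform body text."),
    fn=lambda ticket_id, template: f"queued {template} for {ticket_id}",  # stand-in gateway
    allowed_args=frozenset({"ticket_id", "template"}),
)
```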

Permission scoping is where production systems most commonly fail. A tool that has write access to the entire CRM when it only needs to update one field is a tool that will eventually update the wrong field. Least privilege is not a security feature; it is a design discipline. Every tool call should do the least it can do to accomplish its purpose, and the tool's scope should make anything broader impossible, not just unlikely.
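A sketch of scope that makes the wrong call impossible rather than unlikely: a hypothetical CRM tool bound to a single field at construction, whose `update` method offers no way to name any other field. The in-memory dict stands in for a real CRM client.

```python
class ScopedCRMUpdater:
    """Hypothetical tool that may update exactly one CRM field and nothing else."""

    def __init__(self, crm: dict[str, dict[str, str]], field_name: str):
        self._crm = crm
        self._field = field_name

    def update(self, customer_id: str, value: str) -> None:
        if customer_id not in self._crm:
            raise KeyError(f"unknown customer {customer_id!r}")
        # The field is fixed at construction, so the caller cannot touch any other field.
        self._crm[customer_id][self._field] = value
```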

The most common Tools anti-pattern is sprawl. An agent that starts with five tools and accumulates fifteen over six months will spend its Brain cycles deciding which of the fifteen to call. Decision paralysis in an agent is expensive: it burns tokens on meta-reasoning and degrades the quality of the downstream work. The fix is a periodic tool audit. Which tools has the agent actually called in the last thirty days? Which have not been called? The uncalled ones are either unnecessary (remove them) or overlooked (the Brain isn't finding them, which is a description problem). A focused toolkit of five well-scoped, well-described tools outperforms a sprawling toolkit of fifteen almost every time.
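The thirty-day audit reduces to counting calls per registered tool within a window and listing the zeros. A minimal sketch, assuming the audit trail can be exported as `(tool_name, timestamp)` pairs.

```python
from datetime import datetime, timedelta


def audit_tools(registered: set[str], call_log: list[tuple[str, datetime]],
                now: datetime, window_days: int = 30) -> dict[str, int]:
    """Count calls per registered tool inside the window."""
    cutoff = now - timedelta(days=window_days)
    counts = {name: 0 for name in registered}
    for name, at in call_log:
        if name in counts and at >= cutoff:
            counts[name] += 1
    return counts


def uncalled(counts: dict[str, int]) -> list[str]:
    """Tools with zero calls: remove them, or rewrite their descriptions."""
    return sorted(name for name, n in counts.items() if n == 0)
```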

---

4. The Governance — Guardrails and Escalation

The Governance component is what stops the agent from doing the wrong thing, notices when the agent is drifting, and hands control back to a human when the situation exceeds the agent's mandate. It is the safety layer built into the agent itself, distinct from the Guardian agent in the broader system, though it works in coordination with it.

Governance operates at two levels. Prompt-level governance lives inside the system prompt: explicit instructions telling the Brain what it will not do, what triggers escalation, what falls outside its mandate. Server-level governance lives outside the Brain, in the deterministic code that wraps the agent and sits between its output and the outside world: schema validators checking output format, permission systems refusing tool calls outside the agent's scope, routing logic sending low-confidence outputs to human review, rate limiters, redaction layers, audit loggers. The LLM has no influence over any of that code, which is what makes it enforcement rather than suggestion. Both are required. Neither is sufficient alone.

Prompt-level governance is necessary because the Brain needs to know its own limits. An Assistant told "never send emails, only draft them" internalizes that rule and behaves accordingly. A Tasker told "only update the CRM if the source data includes a valid customer ID" shapes its behavior around the rule. The rule lives in the prompt, the Brain reads the prompt on every run, the rule holds.

The problem with prompt-level governance alone is that it degrades. Under load, with unusual inputs, with long contexts, the Brain starts to interpret rules instead of following them. "Never send emails" becomes "never send emails unless the user seems to really want one." "Only update the CRM if there is a valid customer ID" becomes "update the CRM if the ID looks mostly valid." The rule was in the prompt. The prompt no longer enforces it. This is why server-level governance has to exist alongside it: deterministic code that validates the output before it ships, that refuses to execute an action that violates scope, that logs and blocks and escalates without routing through the Brain.

The design principle — governance must live outside the prompt to be governance at all. If the only place the rule exists is inside the system prompt, the rule is a suggestion. If the rule also exists as a server-level check that the Brain cannot talk its way around, the rule is enforcement.
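A minimal sketch of a server-level check sitting between the Brain's output and the outside world. It assumes the Brain is asked to emit its proposed action as JSON, and uses a hypothetical Assistant scope of "draft, never send". Whatever reasoning the model wrote alongside, a `send_email` action is escalated, and non-JSON output is blocked outright.

```python
import json

ALLOWED_ACTIONS = {"draft_email"}  # hypothetical Assistant scope: draft, never send


def enforce(raw_output: str) -> dict:
    """Deterministic checks on the Brain's proposed action; no prompt text can bypass them."""
    try:
        action = json.loads(raw_output)
    except json.JSONDecodeError:
        return {"status": "blocked", "reason": "output is not valid JSON"}
    if not isinstance(action, dict) or not isinstance(action.get("action"), str):
        return {"status": "blocked", "reason": "missing or malformed 'action' field"}
    if action["action"] not in ALLOWED_ACTIONS:
        return {"status": "escalated", "reason": f"action {action['action']!r} is outside scope"}
    return {"status": "approved", "action": action}
```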

Escalation triggers are the other half of governance. Every agent should have a defined set of conditions under which it stops and calls for help. Unusual input shape. Output below confidence threshold. Action about to exceed scope. Repeated failure on a single task. The triggers are written into the agent's design, coded into its loop, and audited in production. An agent without escalation triggers will eventually face a case it cannot handle and will either fail silently or produce wrong work. An agent with well-designed triggers fails loudly, cleanly, and in a way the system can route.
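The four triggers named above can be coded as a check that runs on every loop iteration and returns the first one that fires. The expected input fields, the 0.7 confidence floor, the scope label, and the failure limit are all hypothetical values for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RunState:
    input_fields: set[str]
    confidence: float
    proposed_scope: str
    failures_on_task: int


EXPECTED_FIELDS = {"customer_id", "request"}  # hypothetical input shape
CONFIDENCE_FLOOR = 0.7                         # assumed threshold
ALLOWED_SCOPE = "support_tickets"              # hypothetical scope label
MAX_FAILURES = 3


def escalation_reason(s: RunState) -> Optional[str]:
    """Return the first trigger that fires, or None if the agent may proceed."""
    if s.input_fields != EXPECTED_FIELDS:
        return "unusual input shape"
    if s.confidence < CONFIDENCE_FLOOR:
        return "output below confidence threshold"
    if s.proposed_scope != ALLOWED_SCOPE:
        return "action about to exceed scope"
    if s.failures_on_task >= MAX_FAILURES:
        return "repeated failure on a single task"
    return None
```

Returning a named reason rather than a bare boolean is what lets the agent fail loudly and in a way the system can route.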

The most common Governance failure is treating it as an afterthought. Teams design the Brain, the Memory, and the Tools, ship the agent, and add governance later when incidents force the issue. The result is layered retrofitting: a refusal rule here, a check there, an escalation path bolted on in response to a specific failure, and the governance layer is full of holes that the next incident will discover. The fix is to treat Governance as a first-class component of the agent, designed alongside the Brain and the Tools, not grafted on after deployment.

A final point on Governance worth naming separately. There is a distinction the rest of the agent literature often blurs: organizational ethics and governance do not live inside individual agents. They are distributed across the organization, enacted through workflow design, oversight structures, escalation paths, and accountability mechanisms. The Governance component inside an agent is the expression of those organizational choices at the agent level, not a substitute for them. An agent whose Governance is tight but whose organization has no governance architecture is an agent doing individual compliance inside a system that doesn't know what compliance means. Governance inside an agent should reflect a policy layer that exists outside it. When it doesn't, the Governance component is operating alone, and operating alone is not what Governance is for.

---

How the Four Components Interact

The components are not a checklist. They are a system inside each agent. What makes an agent work is the interaction between the four.

The Brain reasons over the Memory to decide which Tools to call under which Governance constraints. The Tools' outputs flow back into the Memory, which updates what the Brain knows for the next reasoning step. The Governance layer watches the whole cycle and intervenes when something exceeds its mandate. Every component is talking to every other component on every run. Weakness in one degrades all four.
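The cycle can be sketched as a single loop. The Brain here is any function that maps the current memory to a proposed `(tool_name, argument)` call, or `None` when done; in practice that function would wrap a model call, which this sketch does not include. Governance is reduced to an allow-list and a step limit.

```python
from typing import Callable, Optional

# Hypothetical shape: the Brain reads Memory and proposes a tool call, or None when done.
BrainFn = Callable[[list[str]], Optional[tuple[str, str]]]


def run_agent(brain: BrainFn, tools: dict[str, Callable[[str], str]],
              allowed: set[str], memory: list[str], max_steps: int = 5) -> list[str]:
    """One run: reason over Memory, call Tools, write results back, under Governance checks."""
    for _ in range(max_steps):
        proposal = brain(memory)                          # Brain reasons over Memory
        if proposal is None:
            return memory                                 # Brain decided the work is done
        tool_name, arg = proposal
        if tool_name not in allowed or tool_name not in tools:
            memory.append(f"ESCALATED: {tool_name} blocked by governance")
            return memory                                 # Governance intervenes
        result = tools[tool_name](arg)                    # Tools act on the world
        memory.append(f"{tool_name}({arg}) -> {result}")  # results flow back into Memory
    memory.append("ESCALATED: step limit reached")
    return memory
```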

A powerful Brain paired with a weak Memory produces an agent that reasons brilliantly about the wrong context. A strong Memory with no Tools produces an agent that knows what to do but can't do it. A well-scoped toolkit with no Governance produces an agent that efficiently does things it should not. A tight Governance layer over a weak Brain produces an agent that correctly refuses to do the work it was hired for.

This is why the four components have to be designed together. A deployment that ships strong on three components and weak on one is not seventy-five percent ready; it is zero percent ready, because the weakest component determines the system's failure mode. The diagnostic when an agent underperforms in production is almost always to find the weakest of its four components and rebuild that one. The rest of the agent is usually fine.

---

The Diagnostic — Which Component Is Missing?

When an agent is failing in production, the failure mode tells you which component is the problem.

If the agent produces reasonable outputs on simple cases but collapses on complex ones, the problem is the Brain. The model is under-capable for the task's cognitive load. Upgrade the model, or split the task into simpler sub-tasks and wire them into a workflow archetype instead (more in Part 3, The Five Workflow Archetypes).

If the agent gets the same simple things wrong over and over, the problem is Memory. Either the context it needs is not in its working memory, or its persistent memory is poisoned. Audit what the agent actually sees on each run and what has accumulated in its store over time.

If the agent understands the task but executes the wrong action, the problem is Tools. Either the tool's description is misleading the Brain into calling it at the wrong time, or the tool has broader scope than the task required, or the agent has too many tools and is picking the wrong one. Audit tool usage and tighten scope.

If the agent does things it should never do, or does not escalate when it should, the problem is Governance. The rules it is supposed to follow are only in the prompt. Add server-level enforcement and explicit escalation triggers.
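The fingerprints above can double as a triage table. A sketch, assuming incidents are tagged with one of four hypothetical symptom labels that paraphrase the diagnostic; tallying them points at the component to audit first.

```python
from collections import Counter

# Symptom labels are hypothetical tags paraphrasing the four fingerprints above.
FINGERPRINTS = {
    "fails only on complex cases": "Brain",
    "repeats the same simple mistakes": "Memory",
    "understands the task but takes the wrong action": "Tools",
    "does forbidden things or fails to escalate": "Governance",
}


def suspect_components(symptoms: list[str]) -> Counter:
    """Tally which component each recognized symptom points to; unknown tags are ignored."""
    return Counter(FINGERPRINTS[s] for s in symptoms if s in FINGERPRINTS)
```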

Most agent failures are one-component failures. The fix is usually narrow. Teams that spend months rebuilding entire agents when a Memory audit or a tool description rewrite would have solved the problem are mistaking the component for the agent.

---

FAQ

What are the four components of an AI agent?

Brain (the model plus system prompt plus configuration that does the reasoning), Memory (the context and state the agent holds and remembers), Tools (the external APIs and actions the agent can reach), and Governance (the prompt-level and server-level rules that constrain the agent and define its escalation paths). Every agent has all four. Missing any one creates a gap.

Do I need all four components for a simple agent?

Yes, even when each component is small. A simple Assistant has a Brain (the model and the prompt), a Memory (at minimum the current conversation), Tools (at minimum, the ability to return an answer), and Governance (at minimum, the refusal conditions and any scope rules). The components scale with the agent's job. They do not disappear when the agent is simple.

What's the difference between the Governance component and a Guardian agent?

The Governance component lives inside the individual agent. It's the layer that shapes what that agent will and won't do. A Guardian agent is a separate agent in the broader workflow whose job is to monitor other agents' outputs against policy. Both exist because they solve different problems. The Governance component keeps one agent honest. The Guardian keeps the system honest across many agents.

Can Memory be persistent across sessions?

Yes, when the task requires it, and only when the task requires it. Stateless agents don't need persistent memory, and adding it unnecessarily creates drift risk. Stateful agents need explicit, versioned, scoped persistent memory with validation schedules. The default should be stateless with explicit justification for adding persistence, not the other way around.

How do I know if my agent is weak in one component?

Match the failure mode to the component. Collapses on complexity means the Brain is under-capable. Gets simple things wrong repeatedly means the Memory is wrong or missing. Executes the wrong action means the Tools are miscalibrated or over-scoped. Does things it shouldn't or fails to escalate means Governance is thin. Each failure mode has a fingerprint.

Is the Brain just the choice of model?

No. The Brain is the model plus the system prompt plus the configuration plus the training-data priors the model brings with it. Changing the model without changing the prompt changes the Brain. Changing the prompt without changing the model also changes the Brain. Both matter, and neither is sufficient alone.

---

Four components. Every agent has all four. The design question at this layer is not whether the components are present — they always are — but how each is built and how cleanly they interact.

Once the inside of each agent is sound, the next question is how agents compose into workflows. That's the archetype layer. The Five Workflow Archetypes covers the composition patterns — Prompt Chaining, Routing, Parallelization, Orchestrator-Workers, Evaluator-Optimizer — and the boundary rules that keep each composition from degrading. The Progressive Autonomy Ladder covers how each agent inside a workflow earns its way from Assist to Own. The Agent × Archetype Matrix is the capstone — a full walkthrough of Uncanny Works where types, components, archetypes, and autonomy all show up inside one running production system. And if you skipped it, the Five Agent Types post is the first primitive of the same stack.

Arthur Simonian, Founder, Uncanny Labs · AI Workforce Agency