Agents don't compose themselves.

Teams that ship agents tend to discover this the hard way. The first agent works. The second agent works. Put them in the same workflow and the whole thing gets slower, weirder, and harder to audit than either piece alone. The handoff logic lives in three places and nowhere. The failure modes multiply. The "agent team" becomes a fragile script held together by the specific person who happened to wire it up, and the moment that person rotates out, the system becomes unowned.

The fix is to pick a composition pattern that matches what the workflow actually needs.

The first post of this series laid out the five agent types (Assistant, Analyst, Tasker, Orchestrator, Guardian) and the boundary rules that keep them from collapsing into each other. The second post went inside each one and named the four components every agent is built from: Brain, Memory, Tools, Governance. Both primitives describe individual agents. This write-up is about what happens when you put them in a system.

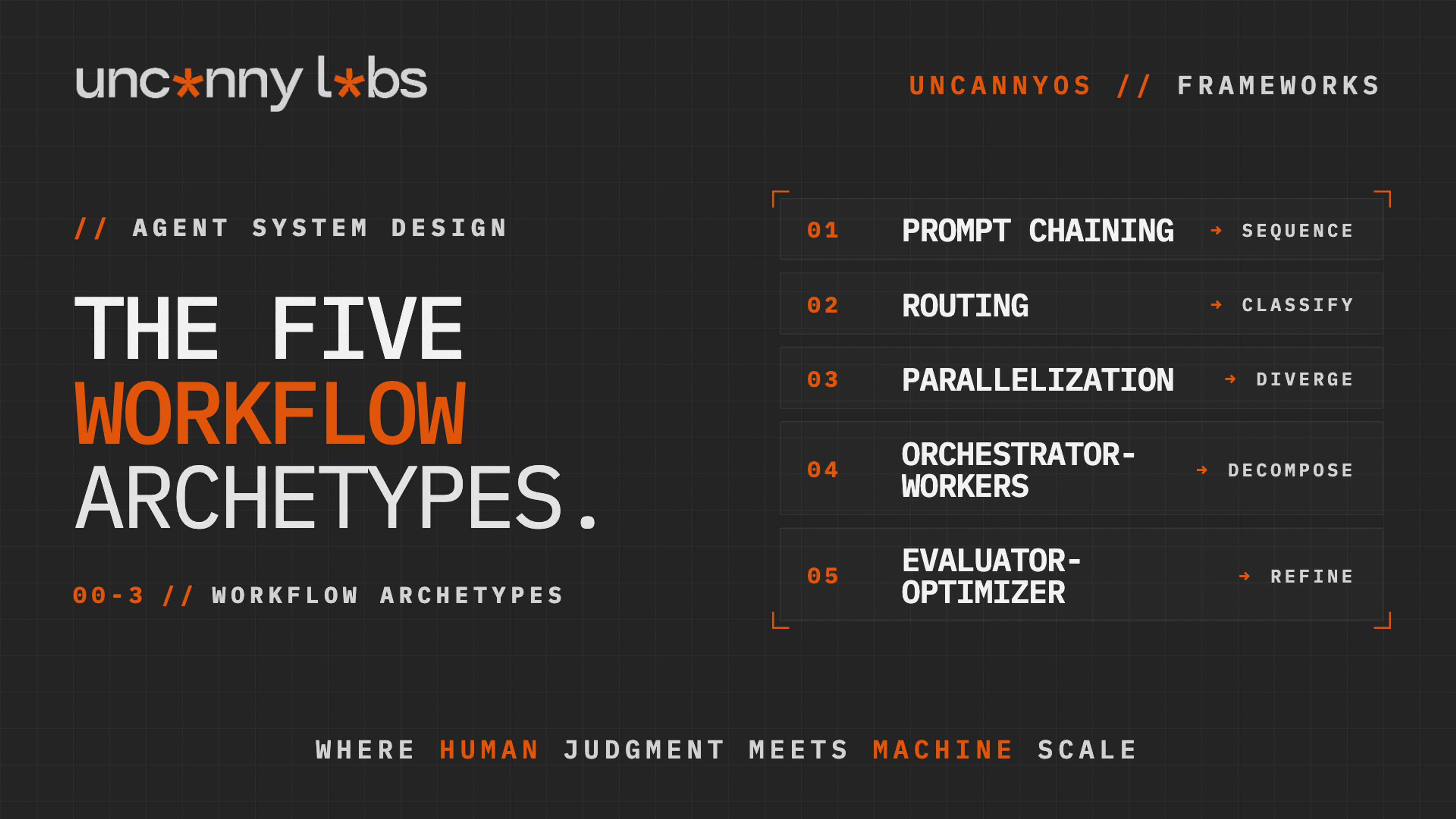

There are five archetypes, and they aren't interchangeable. Each solves a different shape of workflow problem, with its own boundary rule that has to hold for the pattern to work.

The archetypes below are the third primitive of UncannyOS — the design methodology we use at Uncanny Labs to build, govern, and scale agent systems. They are the compositional patterns behind every workflow we ship inside Uncanny Works (our productized service-as-a-software AI Workforces) and across every client engagement. They draw from the broader agentic AI literature and from the workflows we've shipped at Uncanny Labs, formalized into a design vocabulary that survives contact with reality. The five archetypes are Prompt Chaining, Routing, Parallelization, Orchestrator-Workers, and Evaluator-Optimizer. What follows is the taxonomy in full.

Before the archetypes themselves, a note on why most teams pick the wrong one. Four cognitive blockers show up consistently when designers select an archetype:

- Anchoring — reaching for a pattern that looks like the process the team already runs, instead of the pattern the task actually needs.

- Process thinking — optimizing the existing sequence of steps, when imagining a different sequence would produce the real win.

- Linear logic — swim-lane mental models that hide the parallelism the archetype could unlock.

- Success bias — sticking with the archetype that worked last time because it worked last time, even when the new task has a different shape.

The archetypes below are shaped by the workflow, not by the org chart. If the archetype you're drawn to is also the one that mirrors your current team structure, look twice.

---

1. Prompt Chaining — The Sequential Pipeline

Prompt Chaining is the simplest archetype and, for that reason, the one most teams default to without thinking about it. The structure is a chain of agents, one after the other, with a gate between each step. The first agent's output becomes the second agent's input. The second passes to the third. Somewhere along the chain, a gate — programmatic or human — checks whether the work is good enough to continue.

Use Prompt Chaining when the task decomposes into clear sequential steps and you want explicit control between them. A document extraction pipeline where one agent parses, a gate verifies completeness, and a second agent summarizes is a canonical case. An onboarding flow where an Assistant drafts a welcome, a Guardian checks tone, and a Tasker sends the email is another. The pattern is linear, traceable, and cheap to reason about. It is the pattern you reach for when the workflow has no meaningful parallelism and no branching.

The boundary rule: the gate must be explicit, not buried in a system prompt. The reason the rule holds is that gates written in natural language inside an LLM prompt degrade in ways that are invisible from the outside. The prompt says "only proceed if the extraction is complete." The model, under load, decides that "complete enough" is complete. The gate becomes a suggestion. You don't notice until a downstream failure traces back six steps and you realize the check was never enforcing anything.

The fix is to move the gate out of the prompt and into deterministic code. A field-completeness check. A schema validation. A threshold on a classifier score. An explicit human review step. Whatever the gate is, it has to be something the system can pass or fail on cleanly, with the logic living in the workflow engine and not in the model's good intentions. Anthropic's own write-up on this pattern describes the gate the same way, as a programmatic check on the intermediate steps. Gates in prose are gates that drift.
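Here is a minimal sketch of what a gate in code might look like, assuming the extraction step hands back a plain dict. The field names (`invoice_id`, `total`, `due_date`) and the function names are hypothetical, picked only for illustration; a real gate would check whatever the workflow actually requires.

```python
from dataclasses import dataclass

# Hypothetical required fields; the real list comes from the workflow's requirements.
REQUIRED_FIELDS = ("invoice_id", "total", "due_date")


@dataclass(frozen=True)
class GateResult:
    passed: bool
    missing: tuple


def completeness_gate(extraction: dict) -> GateResult:
    """Pass only if every required field is present and non-empty."""
    missing = tuple(
        name for name in REQUIRED_FIELDS
        if extraction.get(name) in (None, "", [])
    )
    return GateResult(passed=not missing, missing=missing)


def run_chain(extraction: dict, summarize) -> str:
    """Run the gate between the parse step and the summarize step."""
    result = completeness_gate(extraction)
    if not result.passed:
        # Fail loudly. The model never gets to decide that "complete enough" is complete.
        raise ValueError(f"gate failed, missing fields: {result.missing}")
    return summarize(extraction)
```

The point of the sketch is where the check lives: in the workflow code, between two agent calls, with a pass or fail the system can log and audit.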

The second common trap is chains that are too long. Each step in a chain multiplies the failure surface. A six-step chain where each step independently fails five percent of the time has a roughly twenty-six percent end-to-end failure rate, and once a failure happens mid-chain the partial work is either wasted or has to be rescued by a retry layer the designer didn't build. If the chain is longer than four steps, the answer is almost always to replace part of it with Orchestrator-Workers.
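The arithmetic behind that number is short enough to check yourself. This sketch assumes every step fails independently at the same rate, which real chains only approximate; the function name is ours.

```python
def chain_failure_rate(step_failure_rate: float, steps: int) -> float:
    """Probability that at least one step fails, assuming independent steps."""
    return 1.0 - (1.0 - step_failure_rate) ** steps


# Compare a few chain lengths at a five percent per-step failure rate.
for n in (2, 4, 6, 8):
    print(n, f"{chain_failure_rate(0.05, n):.1%}")
```

At six steps this comes out to about 26.5 percent, which is where the figure above comes from.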

---

2. Routing — Classification and Specialization

Routing adds a branch to the pipeline. A classifier agent reads the incoming work, decides what kind of work it is, and directs it to one of several specialist branches. Each branch is tuned for its category (different prompts, different tools, sometimes different models). The router itself produces no work product. Its entire job is classification.

Use Routing when the inputs are heterogeneous and benefit from specialist handling. Customer service triage is the textbook example: the router reads the incoming ticket, classifies it as billing, technical, product, or general, and hands it to the right specialist agent. Content workflows use routing to direct pieces into the right production track by format. Support systems use routing to triage by urgency. Anywhere the work is not uniform and the specialists are sharper than a generalist, Routing is the right archetype.

The boundary rule: the router must enumerate every category explicitly and must have a defined default path. The reason the rule holds is that unclassified inputs in a routing system don't stop; they silently take the wrong path. A router that classifies into seven categories will, on the first input that is genuinely none of those seven, pick whichever one is closest. The specialist that receives the input handles it using the wrong playbook, and the failure looks like the specialist being wrong, not like the router being incomplete.

The most dangerous version of this anti-pattern is the implicit "other" bucket — a specialist whose instructions are "handle anything the router couldn't place." That specialist becomes the garbage drawer of the system. Edge cases and failures accumulate there. The workflow is routing most of the volume correctly while quietly misrouting everything strange, and the strange cases are exactly the ones where a mistake is expensive.

The fix is two-fold. Enumerate categories with the business, not with the model. Add an explicit escalation path: a human review queue or a formal "unknown" category that fails loudly instead of quietly. A router that says "I don't know, ask a human" is a correctly functioning router. A router that never says so is a router you can't trust.
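A rough sketch of that two-part fix, assuming the classifier agent returns a raw label and a confidence score. The category names follow the triage example above; `MIN_CONFIDENCE`, `route`, and `dispatch` are hypothetical names, and the threshold value is a placeholder.

```python
from enum import Enum


class Category(Enum):
    BILLING = "billing"
    TECHNICAL = "technical"
    PRODUCT = "product"
    GENERAL = "general"
    UNKNOWN = "unknown"  # explicit, loud default; not a catch-all specialist


# Hypothetical threshold; it would be tuned against labeled tickets.
MIN_CONFIDENCE = 0.7


def route(label: str, confidence: float) -> Category:
    """Map a classifier's raw label to an enumerated category, or UNKNOWN."""
    try:
        category = Category(label.strip().lower())
    except ValueError:
        return Category.UNKNOWN  # label is outside the enumerated set
    if confidence < MIN_CONFIDENCE:
        return Category.UNKNOWN  # label is in the set, but the classifier is unsure
    return category


def dispatch(ticket: str, label: str, confidence: float,
             specialists: dict, review_queue: list):
    """Send the ticket to its specialist, or to a human when the router can't place it."""
    category = route(label, confidence)
    if category is Category.UNKNOWN:
        review_queue.append(ticket)  # escalate instead of guessing
        return None
    return specialists[category](ticket)
```

Note that `UNKNOWN` has no specialist behind it. Anything that lands there goes to the review queue, so the strange cases show up in a place someone is looking.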

Specialized branches can themselves use any archetype. A Routing + Parallelization pattern routes into branches that each run multiple analysts in parallel. A Routing + Evaluator-Optimizer sends high-stakes classifications into a revision loop. Routing is a front door. Each branch behind it is its own workflow, often running its own archetype.

---

3. Parallelization — Independent Perspectives, Merged Deterministically

Parallelization runs multiple agents on the same input, each from a different angle, and merges the results. The agents don't know about each other. They don't see each other's output. They run in parallel, produce independent takes on the same problem, and a downstream step combines them.

Use Parallelization when independent perspectives produce a better result than a single generalist pass. Take content review. One Analyst checks tone, one checks factuality, one checks structure. Each can specialize. None biases the next. The aggregator takes all three findings and produces a consolidated review. Risk analysis is the same shape. Financial, operational, and reputational risk each get a dedicated Analyst, with a rule-based aggregator pulling the scores together. Anywhere the value comes from diversity of perspective, Parallelization is the archetype.

The boundary rule: aggregation logic must be deterministic, not another LLM call. The reason the rule holds is that the entire benefit of parallelization comes from the independence of the perspectives. Each agent ran in isolation specifically so that the takes would not contaminate each other. When the aggregator is itself an LLM, reading all three outputs and "synthesizing" them, you've re-introduced the coupling you tried to avoid. The synthesizer's opinion on the first output colors its reading of the second. The outputs collapse into a blended consensus that is no longer three independent views. You spent the tokens to run three agents and got the cognitive output of one.

The fix is that the aggregator has to be code. That can be a rule like "if any Analyst flags high risk, escalate," a weighted average across the scores, a union of flagged issues, or a set intersection. Whatever the aggregation logic is, it has to be something that a deterministic function can execute without reading the outputs as prose. If the logic is genuinely complex enough to require reasoning, the archetype is wrong. Orchestrator-Workers fits that shape, with an explicit Analyst as the synthesizer and workers designed to hand off structured results.
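As an illustration, here is one way the rules named above (any-flag escalation, a weighted average, a union of issues) could sit in a single deterministic function. It assumes each Analyst returns a structured finding with a numeric score; the `Finding` shape, the 0-to-1 scale, and the `HIGH_RISK` threshold are assumptions for the sketch.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Finding:
    analyst: str        # e.g. "financial", "operational", "reputational"
    risk_score: float   # assumed scale: 0.0 (no risk) to 1.0 (severe)
    issues: frozenset = field(default_factory=frozenset)


HIGH_RISK = 0.8  # hypothetical escalation threshold


def aggregate(findings: list[Finding], weights: dict[str, float]) -> dict:
    """Merge independent findings with fixed rules; no model reads the outputs."""
    if not findings:
        raise ValueError("nothing to aggregate")
    total_weight = sum(weights[f.analyst] for f in findings)
    weighted = sum(f.risk_score * weights[f.analyst] for f in findings) / total_weight
    return {
        # Rule: if any Analyst flags high risk, escalate.
        "escalate": any(f.risk_score >= HIGH_RISK for f in findings),
        # Rule: weighted average across the scores.
        "weighted_risk": weighted,
        # Rule: union of flagged issues, sorted for stable output.
        "all_issues": sorted(set().union(*(f.issues for f in findings))),
    }
```

The aggregator never reads prose. That only works if the Analysts are asked for structured output in the first place, which is a design decision to make before the fan-out is built.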

The second common trap is treating Parallelization as a performance pattern. Three agents running in parallel cost three times what one agent costs, and when model latency is the bottleneck the wall clock barely moves. Parallelization exists to improve correctness. Three Analysts working the same input from different angles produce a better result than any one of them alone. That's when to pick it. If your motivation is that "parallel sounds faster," you've misread the archetype.

---

4. Orchestrator-Workers — Decompose, Dispatch, Synthesize

Orchestrator-Workers is the archetype for complex work where the decomposition isn't known in advance. A central orchestrator reads the input, decides how to break it down, and dispatches worker agents to handle each sub-task in parallel. A synthesizer then merges the results into a coherent output. Unlike Parallelization, the orchestrator decides the sub-tasks dynamically based on the input it receives.

Use Orchestrator-Workers when the task is complex and the steps depend on interpreting the input. A research brief where the orchestrator decides "this topic needs market analysis, competitor mapping, and sentiment review" and dispatches specialist workers to each. A compliance review where the orchestrator inspects a document and decides which checks apply. A content pipeline where the orchestrator reads an article and decides which platforms, which formats, and which variants to produce. The pattern is the most common shape for production-grade agent systems because most real work does not fit into a fixed linear pipeline and does not split cleanly into fixed parallel tracks.

The boundary rule has two parts. First, the orchestrator's output must be structured. That means JSON, a typed schema, or anything else a downstream parser can reliably handle. Second, the workers must be independent of each other. The reason the first part holds is that an orchestrator producing a plan in prose is an orchestrator that can't be dispatched from programmatically. Something has to parse the plan, extract the tasks, and route each one. If the plan is prose, that something is another LLM, which reintroduces the same kind of coupling the Parallelization rule exists to prevent. The reason the second part holds is that workers depending on each other's outputs secretly turn your fan-out into a chain. The parallelism you paid for evaporates. The debugging surface explodes because a failure in worker B might be caused by a failure in worker A that the system didn't surface.

The anti-pattern is workers that need each other's outputs to do their jobs. The fix, when you discover this, is almost always that what you actually have is a nested Prompt Chain disguised as a fan-out. Re-architect it as a chain, or promote the dependency to a second orchestrator round. The orchestrator produces plan A, workers run, the synthesizer produces inputs for plan B, and a second orchestrator round dispatches workers again.
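Both halves of the rule can be sketched in a few lines, assuming the orchestrator is asked to emit its plan as a JSON list. The worker names come from the research-brief example above; the `worker`/`input` task shape and the function names are hypothetical. The schema deliberately has no field for one task to reference another, so a plan that tries to declare a dependency is rejected before dispatch.

```python
import json
from concurrent.futures import ThreadPoolExecutor

# Hypothetical worker registry keys, taken from the research-brief example.
ALLOWED_WORKERS = {"market_analysis", "competitor_mapping", "sentiment_review"}


def parse_plan(raw: str) -> list[dict]:
    """Validate the orchestrator's JSON plan before anything is dispatched."""
    plan = json.loads(raw)  # a prose plan raises json.JSONDecodeError (a ValueError)
    if not isinstance(plan, list) or not plan:
        raise ValueError("plan must be a non-empty JSON list of tasks")
    for task in plan:
        # Exactly two keys: there is no place to declare a dependency on a sibling.
        if not isinstance(task, dict) or set(task) != {"worker", "input"}:
            raise ValueError(f"task must have exactly 'worker' and 'input': {task!r}")
        if not isinstance(task["worker"], str) or task["worker"] not in ALLOWED_WORKERS:
            raise ValueError(f"unknown worker: {task['worker']!r}")
    return plan


def dispatch(plan: list[dict], workers: dict) -> list:
    """Fan out; each worker sees only its own input, never a sibling's output."""
    with ThreadPoolExecutor() as pool:
        futures = [pool.submit(workers[t["worker"]], t["input"]) for t in plan]
        return [f.result() for f in futures]
```

If a real dependency shows up, this sketch has no way to express it, and that is the intent: the dependency gets promoted to a second orchestrator round or the section gets rebuilt as a chain.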

A fully-formed Orchestrator-Workers pattern almost always includes a Guardian before the synthesized output ships. The orchestrator plans, the workers execute, the synthesizer merges, and the Guardian checks the final product against policy before it leaves the system. Skipping the Guardian in this archetype is the single most common production mistake in multi-agent systems. It's also the one that costs the most when it goes wrong, because the orchestrator has usually touched multiple external systems by that point and the errors are already propagating.

---

5. Evaluator-Optimizer — The Writer-Critic Loop

Evaluator-Optimizer is a feedback loop. One agent produces the work, whether that's a draft, a design, or a recommendation. A second agent evaluates the output against explicit criteria. If the output passes, it ships. If it fails, the evaluator's feedback goes back to the first agent, which produces a revised version. The loop runs until the output passes or a maximum number of iterations is hit.

Use Evaluator-Optimizer when the output benefits from iterative refinement and the quality criteria are concrete enough to evaluate mechanically. Policy drafts with compliance criteria. Marketing copy with brand voice criteria. Code with test criteria. Translations with fidelity criteria. Anywhere a human would naturally say "draft, review, revise, ship," the pattern maps cleanly onto two agents (one producer, one critic) with a loop between them.

The boundary rule has two parts. First, the evaluator needs concrete criteria. "Does this meet requirements A, B, and C?" is a criterion. "Is this good?" is too vague to function as one. Second, the loop needs a hard iteration ceiling. The reason the first part holds is that soft criteria produce soft improvement. An evaluator asked "is this good?" will say yes on iteration two regardless of whether the draft is actually better, because "good" has no anchor. The evaluator needs a checklist it can fail against. If you can't write the checklist, what you have is a preference. Preferences don't refine.

The reason the second part holds is that without a ceiling the loop runs forever. The writer produces. The evaluator finds something new to object to. The writer revises, the evaluator objects again. Three iterations become seven, seven become fifteen. Tokens burn. Production grinds. Meanwhile the output stopped meaningfully improving around iteration three. The ceiling, typically three to five iterations, forces the system to escape the loop and call for help when the automated process can't converge. That escape is the feature working as designed.
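The two parts of the rule fit together in a loop like the one below. This is a sketch, not a reference implementation: `write` stands in for the producer agent, each entry in `criteria` stands in for one named pass/fail check (code or an evaluator call that returns a boolean), and `MAX_ITERATIONS` and `NeedsHuman` are hypothetical names.

```python
from typing import Callable

MAX_ITERATIONS = 3  # hypothetical ceiling, inside the three-to-five range above


class NeedsHuman(Exception):
    """Raised when the loop hits its ceiling without passing every criterion."""


def refine(
    write: Callable[[str, list[str]], str],
    criteria: dict[str, Callable[[str], bool]],
    brief: str,
) -> str:
    """Run the writer-critic loop until every criterion passes or the ceiling is hit."""
    feedback: list[str] = []
    for _ in range(MAX_ITERATIONS):
        draft = write(brief, feedback)
        # Each criterion is a named check the draft can fail, not an open "is this good?"
        feedback = [name for name, check in criteria.items() if not check(draft)]
        if not feedback:
            return draft
    # The escape hatch: stop burning tokens and hand the draft to a person.
    raise NeedsHuman(f"no convergence after {MAX_ITERATIONS} rounds; failing: {feedback}")
```

The exception carries the names of the criteria still failing, so whoever picks it up knows what the loop couldn't fix.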

The anti-pattern is treating the evaluator as a Guardian. The evaluator and the Guardian have different jobs. The evaluator drives revision. Enforcement is the Guardian's work. An evaluator with a Guardian's veto power turns the loop into a denial machine, because a Guardian is measured on catch rate and a Guardian incentivized to catch will find something to catch on every pass. Keep the evaluator focused on "is this at the quality threshold?" and put the Guardian at the end of the loop, outside of it, checking the final accepted output against compliance rules before release.

Evaluator-Optimizer is also the archetype most prone to hidden cost blowouts. Each iteration is another full-system call. A content workflow that pushes every draft through five rounds of evaluation is burning at least five times the tokens of a single-pass system, since every round pays for both the writer and the evaluator. The pattern only pays off when the quality delta across iterations is meaningful. Measure the delta before committing. If iteration three produces the same quality as iteration one, the loop is theater and the archetype is wrong.
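One simple way to measure the delta, assuming you already have a quality score per iteration from a pilot run (how those scores are produced is out of scope here). The function name and the `min_gain` threshold are hypothetical.

```python
def useful_iterations(scores: list[float], min_gain: float) -> int:
    """Count iterations up to the first one that improves by less than min_gain."""
    if not scores:
        return 0
    count = 1  # the first draft always counts
    for prev, cur in zip(scores, scores[1:]):
        if cur - prev < min_gain:
            break
        count += 1
    return count
```

A result of 1 means the revisions never cleared the bar and a single pass would have done the job. A result of 3 on a five-round loop suggests the ceiling is set two rounds too high.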

---

How to Choose the Right Archetype

Four questions.

1) Can the task be broken into clear, fixed sequential steps with nothing meaningfully parallel? If yes, Prompt Chaining. If the sequential steps exceed four, consider whether Orchestrator-Workers would handle the middle of the chain more cleanly.

2) Are there multiple distinct kinds of input that need different handling? If yes, Routing. Pair it with whatever archetype each branch needs downstream.

3) Does the task benefit from multiple independent perspectives on the same input, merged by a rule? If yes, Parallelization. If the merge requires reasoning rather than a rule, you don't want Parallelization; you want Orchestrator-Workers.

4) Is the task complex enough that the decomposition depends on the input itself? If yes, Orchestrator-Workers. If the output also benefits from a revision loop, compose Orchestrator-Workers with Evaluator-Optimizer around the final synthesis step.

If none of these apply cleanly, the workflow probably doesn't need a multi-agent system yet. A single well-scoped agent with the right boundary rule is often more correct than a poorly-matched archetype. The archetypes exist to add leverage where it's needed — not to add agents where one would have sufficed.
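The four questions and the fallback can be written down as a small decision function. Treat this as a simplification: it asks the questions in order and stops at the first yes, whereas real workflows often combine archetypes. The `Task` fields and the returned strings are our own shorthand for the questions above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    fixed_sequential_steps: bool = False
    step_count: int = 0
    heterogeneous_inputs: bool = False
    independent_perspectives: bool = False
    merge_is_rule_based: bool = True
    decomposition_depends_on_input: bool = False
    benefits_from_revision: bool = False


def suggest_archetype(t: Task) -> str:
    """Ask the four questions in order; the first yes decides."""
    if t.fixed_sequential_steps:
        if t.step_count > 4:
            return "Prompt Chaining (consider Orchestrator-Workers for the middle)"
        return "Prompt Chaining"
    if t.heterogeneous_inputs:
        return "Routing"
    if t.independent_perspectives:
        # A merge that needs reasoning is not Parallelization.
        return "Parallelization" if t.merge_is_rule_based else "Orchestrator-Workers"
    if t.decomposition_depends_on_input:
        if t.benefits_from_revision:
            return "Orchestrator-Workers + Evaluator-Optimizer"
        return "Orchestrator-Workers"
    return "Single agent"
```

For example, `suggest_archetype(Task(independent_perspectives=True, merge_is_rule_based=False))` lands on Orchestrator-Workers, which is the redirect question three describes.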

---

The Boundary Rules at a Glance

- Prompt Chaining: the gate must be explicit, not buried in a system prompt.

- Routing: the router must enumerate every category and have a defined default.

- Parallelization: aggregation logic must be deterministic, not another LLM call.

- Orchestrator-Workers: orchestrator output must be structured, and workers must be independent of each other.

- Evaluator-Optimizer: the evaluator uses concrete criteria and a hard iteration ceiling.

Every agent workflow that fails in production violates one of these. The rules are cheap. The violations are not.

---

Why Most Archetype Deployments Never Make It to Scale

Here's the hard number. Ninety percent of enterprise AI pilots never reach production. The interesting part is the root-cause breakdown. Lack of an operating model — 42%. Unclear business case — 38%. Governance gaps — 35%.

Each of those root causes maps directly onto an archetype selection error.

The operating-model failure shows up when teams pick Prompt Chaining for work that actually required Orchestrator-Workers. The pilot runs as an isolated script, demonstrates the model works, and then has nowhere to integrate because the broader workflow was never decomposed. A chain that survives the demo dies in the handoff to the next team.

The governance gap shows up when teams deploy Parallelization or Orchestrator-Workers without an Evaluator or a Guardian layer. The parallel fan-out is fast. The synthesized output looks clean. Nothing is checking it against policy. The first time it ships something it shouldn't, the post-mortem finds that the archetype was half-built.

The unclear-business-case failure shows up when teams pick the archetype that looks most impressive instead of the one that matches the task. Orchestrator-Workers gets chosen because it sounds sophisticated, when the underlying task was a four-step Prompt Chain that would have shipped in a week. The pilot then spends two quarters building the wrong pattern.

None of these are technology failures. All three are archetype-selection failures that would have been caught in five minutes if someone had checked whether the use case actually fits the archetype. Pick the archetype last, not first. Decompose the work, check which of the four blockers you're anchored in, and the archetype declares itself.

---

A Final Pattern — Zoom In, Zoom Out

One more pattern reframes all five archetypes at once. No archetype is a complete system. Every archetype you deploy is a single step in a longer chain of work. A trend-to-concept Orchestrator-Workers workflow is step one of a chain that also includes prototyping, sourcing, negotiation, and product development. Deploy the new archetype and the throughput contract with every adjacent workflow changes. If the new Orchestrator-Workers pattern now produces seventy concepts a week where the old process produced five, something has to change in the prototyping team downstream, or the bottleneck just moved.

The practical implication — design each archetype for the handoff it enables, not just for the task it runs. Ask what the next workflow needs from this one, and what this one needs from the workflow upstream. Archetypes that optimize in isolation ship to pilot. Archetypes that optimize for the handoff ship to production.

---

FAQ

What are the five agentic workflow archetypes?

Prompt Chaining, Routing, Parallelization, Orchestrator-Workers, and Evaluator-Optimizer. Each solves a specific shape of workflow problem. Prompt Chaining is sequential with gates. Routing is classification with branches. Parallelization is independent perspectives with deterministic merging. Orchestrator-Workers is dynamic decomposition with synthesis. Evaluator-Optimizer is a writer-critic feedback loop.

When should I use Prompt Chaining versus Orchestrator-Workers?

Prompt Chaining is for fixed sequential steps where every run follows the same path. Orchestrator-Workers is for complex tasks where the decomposition depends on interpreting the input. If the steps are known at design time, use Prompt Chaining. If the steps are decided at run time, use Orchestrator-Workers.

Can I combine archetypes?

Most production systems do. Routing + Parallelization, Orchestrator-Workers + Evaluator-Optimizer, Prompt Chaining with a routing step inside it. The rule when combining is to keep the boundaries visible — each archetype section of the workflow should be labeled and scoped, not blended into a single unreadable graph.

What's the difference between Parallelization and Orchestrator-Workers?

In Parallelization, the branches are fixed at design time and do the same kind of work on the same input from different angles. In Orchestrator-Workers, the branches are decided at run time by the orchestrator based on the input, and the workers do different work. Parallelization merges with a rule. Orchestrator-Workers merges with reasoning.

Why does aggregation in Parallelization have to be deterministic?

The benefit of Parallelization is independence. Each agent runs isolated from the others so their outputs don't bias each other. An LLM aggregator reads all the outputs together and synthesizes them, which couples them again — the first output colors the reading of the second. The independence dissolves. A deterministic aggregator preserves the independence by combining the outputs mechanically.

---

Five archetypes. Five ways to compose agents into systems that survive production. The archetypes are not interchangeable, and the boundary rules that keep each one honest are the difference between a workflow that scales and one that quietly degrades until the failure is public.

The archetypes are composition patterns. The next question is how the agents inside each archetype earn their way from supervised work to unattended operation. That's the autonomy ladder. The Progressive Autonomy Ladder covers how each agent inside any workflow moves from Assist to Own, earned through evidence rather than timeline. The Agent × Archetype Matrix is the capstone — a full walkthrough of Uncanny Works where types, components, archetypes, and autonomy all show up inside one running production system. If you haven't read them, the Five Agent Types and the Four Components of an AI Agent are the first two primitives of the same stack.

— Arthur Simonian Founder, Uncanny Labs · AI Workforce Agency