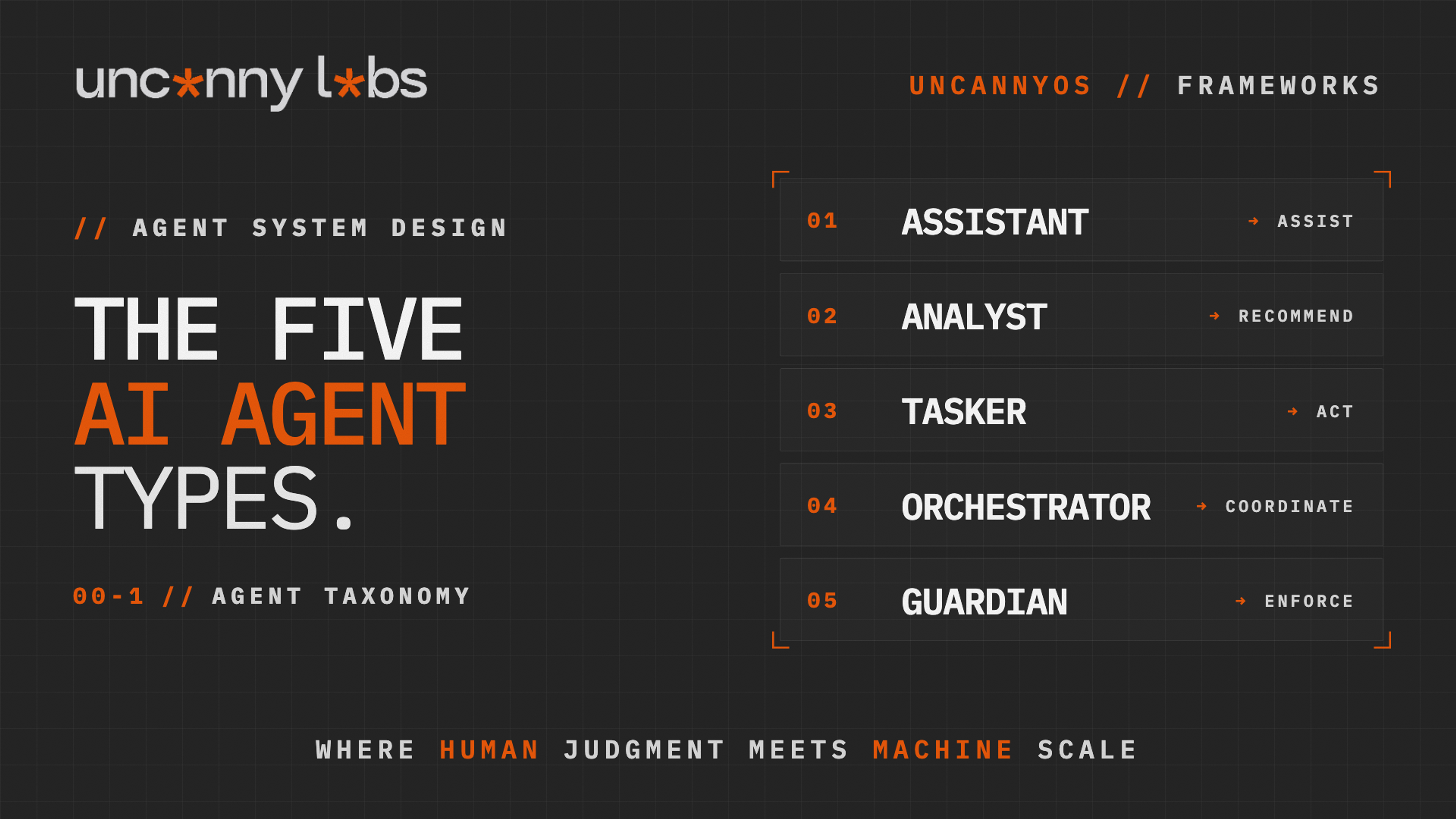

The word "agent" in AI has collapsed five different roles into one. Assistants. Analysts. Taskers. Orchestrators. Guardians. Each has a distinct job, each has a distinct failure mode, and all of them are called by the same name in most discussions. The collapse is the reason most agent projects never reach production. The first axis of the design stack is pulling them back apart.

When a team doesn't know which is which, the system ships chaos. An "agent" that was supposed to summarize research starts sending emails. A "copilot" that was supposed to recommend edits starts shipping them. A "supervisor" that was supposed to enforce quality gets pressured into approving bad output because it was also measured on completion. This pattern repeats on every agent project that never reaches production. The model and the tooling are fine. The taxonomy was never drawn.

Agent systems have a design stack. The first axis of that stack is type — what a given agent is for. There are five of them. Each has a boundary rule that stops it from drifting into the next one's lane. Break the rule and you don't have an agent system. You have a liability.

The taxonomy below is the first primitive of UncannyOS — the design methodology we use at Uncanny Labs to build, govern, and scale agent systems at production load. It's the starting point for every workflow we ship, inside Uncanny Works (our productized service-as-a-software AI Workforces) and across every client engagement. It draws from the broader agentic AI literature and from the systems we've built and shipped at Uncanny Labs, formalized into a design vocabulary that survives contact with reality. The five types are the Assistant, the Analyst, the Tasker, the Orchestrator, and the Guardian. What follows is the taxonomy in full.

---

1. The Assistant — The Interface Layer

The Assistant prepares. It retrieves information, summarizes long context, drafts first versions, and stages the material a human or another agent needs to move forward. Its entire purpose is to reduce the friction between a question and a readable answer.

Autonomy sits at Assist. A human reviews every output before anything happens downstream. The Assistant surfaces, it doesn't decide, and it never actuates. If you ask an Assistant to read a 40-page vendor contract and produce the three clauses that matter, you've used it correctly. If you ask it to decide whether to sign, you've left the lane.

The canonical use cases are research briefs, meeting prep, policy Q&A, document summarization, code explanation, and email drafting. Anywhere a knowledge worker loses thirty minutes to preparation that isn't itself the work, an Assistant belongs.

The boundary rule: an Assistant that decides is an uncontrolled Analyst. The rule holds because decisions require trade-off reasoning, which in turn requires a mandate and a review layer. Assistants have neither. They produce context. When you push an Assistant into deciding (for example, "research X and email me if it's urgent"), you've silently promoted it to Analyst without giving it Analyst-level governance. The day it's wrong, no layer catches it. Nothing above the Assistant was designed for oversight because the Assistant wasn't supposed to need it.

The most common anti-pattern is the "do-everything" Assistant — a general chatbot handed access to calendars, inboxes, and task systems and instructed to "be helpful." Helpfulness is not a type. What you've built is an Assistant with the powers of a Tasker and the judgment of an Analyst, accountable for none of them. When the helpfulness produces a wrong decision, the post-mortem has nowhere to land.

Assistants are the most common agent in the wild and the most commonly mislabeled. The fix is almost always subtraction. Take away the action and the decision, leave the preparation, and the Assistant works.

---

2. The Analyst — The Reasoning Layer

The Analyst interprets. It scores, classifies, forecasts, ranks, and recommends. It produces a reading of the situation that a human — or a downstream agent — uses to make a call. Where the Assistant ends at "here is what is," the Analyst ends at "here is what I think it means."

Autonomy sits at Recommend. The Analyst hands off a judgment; the human chooses whether to act on it. Lead scoring, churn prediction, risk assessment, topic classification, sentiment analysis, deal prioritization, and pricing scenario comparison are all Analyst work. The output is never action. The output is interpretation, with enough structure that a reviewer can agree, disagree, or override.

The boundary rule — an Analyst never pulls the trigger. Separating judgment from execution is what makes the recommendation reviewable. The moment an Analyst acts on its own recommendation, the review layer collapses and you've built a closed loop with no external check. The recommendation was never audited. The action is irreversible. The first time the Analyst is wrong at scale, the damage compounds silently until something obvious breaks.

The classic anti-pattern: "score this lead, and if the score is above eighty, auto-add to the outbound sequence." The auto-execution is where the system leaves the Analyst lane. You've just built an Analyst that takes action, which is exactly the failure mode the separation of judgment from execution exists to prevent. If you want that behavior, split it: one Analyst produces the score, one Tasker executes the enrollment under a deterministic rule, and one Guardian audits threshold drift. Three agents. Three accountabilities. Three places to look when something goes wrong.
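The three-way split can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a production design: every name here (`LeadScore`, `analyst_score`, `tasker_enroll`, `guardian_threshold_ok`) is hypothetical, and the scoring rule is a stand-in for whatever model the Analyst actually runs.

```python
from dataclasses import dataclass

# All names below are hypothetical and exist only for illustration.


@dataclass(frozen=True)
class LeadScore:
    lead_id: str
    score: int       # 0-100, produced by the Analyst
    rationale: str   # kept so a reviewer can agree, disagree, or override


def analyst_score(lead_id: str, signals: dict[str, int]) -> LeadScore:
    """Analyst: interprets signals and returns a judgment. Takes no action."""
    score = min(100, sum(signals.values()))  # stand-in scoring rule
    return LeadScore(lead_id, score, f"sum of {len(signals)} signals")


def tasker_enroll(result: LeadScore, threshold: int, sequence: list[str]) -> bool:
    """Tasker: applies one deterministic rule. It never rescores or interprets."""
    if result.score > threshold:
        sequence.append(result.lead_id)
        return True
    return False


def guardian_threshold_ok(live_threshold: int, approved_threshold: int) -> bool:
    """Guardian: flags drift between the live threshold and the approved one."""
    return live_threshold == approved_threshold


outbound: list[str] = []
score = analyst_score("lead-42", {"fit": 50, "intent": 35})
enrolled = tasker_enroll(score, threshold=80, sequence=outbound)
```

Each function can be reviewed, tested, and replaced on its own, which is the practical meaning of three accountabilities.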

Well-designed Analysts are boring on purpose. They score, they rank, they recommend — and they stop. The boring part is the feature. A boring Analyst is a reviewable Analyst, and a reviewable Analyst is the reason the rest of the system can move faster without losing the audit trail.

---

3. The Tasker — The Actuation Layer

The Tasker executes. It takes a bounded instruction and performs a specific, reversible action inside pre-defined guardrails. Create this record. Send this email. Update this field. Publish this draft to this channel. Post this status. The Tasker is the agent that touches the outside world.

Autonomy sits at Act, bounded. The action is reversible, the blast radius is small, and the guardrails are explicit. A Tasker sending an email has been given a template, a recipient rule, and a rate limit. A Tasker updating a CRM has been given a field, a source, and a validator. The action space is enumerated ahead of time. Anything outside that space routes up.
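As a rough sketch of what an enumerated action space might look like for the email case: the `EmailTasker` class, the `EscalateToHuman` exception, and the domain-based recipient rule are all assumptions made for this example. The rate limit is simplified to a total send count rather than a time window.

```python
from dataclasses import dataclass, field


class EscalateToHuman(Exception):
    """Raised when a request falls outside the enumerated action space."""


@dataclass
class EmailTasker:
    templates: dict[str, str]   # the only messages this Tasker may send
    allowed_domain: str         # recipient rule (hypothetical: one domain)
    rate_limit: int             # simplified: max total sends, not per window
    sent: list[tuple[str, str]] = field(default_factory=list)

    def send(self, template_id: str, recipient: str, **fields: str) -> str:
        # Anything outside the guardrails routes up instead of being guessed at.
        if template_id not in self.templates:
            raise EscalateToHuman(f"unknown template: {template_id}")
        if not recipient.endswith("@" + self.allowed_domain):
            raise EscalateToHuman(f"recipient not allowed: {recipient}")
        if len(self.sent) >= self.rate_limit:
            raise EscalateToHuman("rate limit reached")
        body = self.templates[template_id].format(**fields)
        self.sent.append((recipient, body))  # stand-in for the real send
        return body


tasker = EmailTasker({"status": "Order {order_id} has shipped."},
                     "example.com", rate_limit=2)
body = tasker.send("status", "ana@example.com", order_id="A1")
```

The Tasker has no code path for composing free text, so a request it cannot satisfy from its templates has nowhere to go but up.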

The boundary rule — a Tasker that interprets is an uncontrolled Analyst. The reason the rule holds is that interpretation is a different job with a different review layer. When you hand a Tasker a request like "send an email to the customer about their concern," you've asked it to decide what the concern is, decide which facts matter, and decide how to frame them — three Analyst operations, none of them audited. The Tasker executes the result of its own interpretation. If the interpretation was wrong, nothing catches it.

The fix is to pair a Tasker with an Analyst upstream. The Analyst reads the ticket and produces a structured interpretation — category, tone, required response. The Tasker takes the structured interpretation and executes the bounded action. The layers hold. The audit trail is two hops deep instead of zero.
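One way to picture the handoff, as a sketch only: the `Interpretation` record, the keyword rule inside `analyst_interpret`, and the `RESPONSES` table are hypothetical placeholders. A real Analyst would likely be a model call; the point is the structured record that passes between the two agents.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    BILLING = "billing"
    SHIPPING = "shipping"


@dataclass(frozen=True)
class Interpretation:
    ticket_id: str
    category: Category
    tone: str
    required_response: str  # key into the Tasker's fixed response table


audit_log: list[tuple[str, str]] = []


def analyst_interpret(ticket_id: str, text: str) -> Interpretation:
    """Analyst: reads the ticket and emits a structured, reviewable reading."""
    # Stand-in keyword rule; assumed for the example only.
    category = Category.BILLING if "invoice" in text.lower() else Category.SHIPPING
    interp = Interpretation(ticket_id, category, tone="apologetic",
                            required_response=f"{category.value}_ack")
    audit_log.append(("analyst", f"{ticket_id}:{category.value}"))
    return interp


RESPONSES = {
    "billing_ack": "We are reviewing your invoice.",
    "shipping_ack": "We are checking your shipment.",
}


def tasker_reply(interp: Interpretation) -> str:
    """Tasker: looks up the bounded action. It never reads the ticket text."""
    reply = RESPONSES[interp.required_response]
    audit_log.append(("tasker", f"{interp.ticket_id}:{interp.required_response}"))
    return reply


reply = tasker_reply(analyst_interpret("T-1", "My invoice is wrong"))
```

After one ticket, `audit_log` holds one entry per hop, so a reviewer can see both what was concluded and what was done.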

Taskers are the agents that scale. They run high volume, they run fast, and because they don't interpret, they don't degrade when the inputs drift. A well-bounded Tasker sending a hundred thousand status-update emails a day is a safer system than a single "smart email agent" with latitude to interpret what each recipient might want to hear. Volume amplifies any drift. The Tasker contract — narrow action space, explicit guardrails, no interpretation — is what keeps volume from turning into liability.

---

4. The Orchestrator — The Process Kernel

The Orchestrator coordinates. It decomposes a high-level task into a sequence of sub-tasks, delegates each sub-task to the right specialist, manages state across the run, and handles branching, retries, and escalation. Where Assistants prepare and Analysts recommend and Taskers execute, the Orchestrator sequences all three into a coherent workflow that produces an outcome no single agent could produce alone.

Autonomy sits at Act or Own, with defined escalation paths. An Orchestrator given a goal — "publish this week's article to LinkedIn, X, and the newsletter" — plans the steps, dispatches Taskers to each channel, monitors for failures, and escalates to a human when something is ambiguous or out of scope. It's the nervous system of the agent team. Every multi-agent system has one, even if the system doesn't call it by name.

The boundary rule: an Orchestrator that governs compliance is a conflicted authority. The reason the rule holds is incentive structure. An Orchestrator is measured on throughput, completion, and latency. A Guardian is measured on catch rate, enforcement, and refusal. Those two sets of metrics are in tension. When you collapse them into one agent, the throughput incentive wins, because throughput is what the system celebrates on every successful run. The Guardian function quietly degrades until the day the failure is unrecoverable.

The anti-pattern here is subtle because the shortcut feels efficient. It's cheaper in tokens to give the Orchestrator a "quality check" step than to run a separate Guardian. It's easier to author a single system prompt than to manage two. The saving looks real until the first incident proves that the Orchestrator approved its own worker's output against the criteria it was also responsible for enforcing. The investigation finds that the agent was never independent from what it was supposed to check.

Good Orchestrators are modest. They plan, dispatch, retry, and escalate. They don't judge their own output. They don't decide what's acceptable. They call the Guardian and defer.
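A minimal sketch of that modesty, assuming workers and the Guardian are plain callables: the `orchestrate` function and the example workers are hypothetical. The Orchestrator dispatches, retries, and escalates, and the acceptability check lives entirely in a separate Guardian that it calls but does not own.

```python
from typing import Callable

Worker = Callable[[str], str]     # takes a channel name, returns an output
Guardian = Callable[[str], bool]  # True means the output may ship


def orchestrate(steps: list[tuple[str, Worker]], guardian: Guardian,
                max_retries: int = 1) -> dict[str, str]:
    """Dispatch each step, retry failures, and defer every verdict to the Guardian."""
    results: dict[str, str] = {}
    for name, worker in steps:
        for _attempt in range(max_retries + 1):
            try:
                output = worker(name)
            except RuntimeError:
                continue  # transient failure: retry
            # The Orchestrator does not judge the output itself.
            results[name] = output if guardian(output) else "escalated: blocked by guardian"
            break
        else:
            # Loop finished without a successful attempt.
            results[name] = "escalated: retries exhausted"
    return results


def post(channel: str) -> str:
    return f"posted to {channel}"


def leaky(channel: str) -> str:
    return f"posted DRAFT to {channel}"


def broken(channel: str) -> str:
    raise RuntimeError("channel down")


def no_drafts(output: str) -> bool:
    return "DRAFT" not in output


results = orchestrate(
    [("linkedin", post), ("x", leaky), ("newsletter", broken)], no_drafts
)
```

Swapping in a stricter Guardian changes what ships without touching the Orchestrator, which is the independence the boundary rule is protecting.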

A production illustration: a B2B sales intelligence deployment assigned an Orchestrator to interpret a sales rep's request, dispatched parallel Analysts for market intelligence, customer insight, pricing, and risk assessment, and placed a Guardian over the whole execution. Pre-deal research that previously took days collapsed to minutes. The number of scenarios the team could evaluate per account went up by a factor of ten to twenty. The point isn't the speed. The point is that the Orchestrator made the multi-agent topology legible to the business: each specialist owned one reasoning domain, the Guardian owned the quality gate, and the sales team owned the decision. Each accountability became explicit where previously one overloaded human had carried all of them invisibly.

---

5. The Guardian — The Compliance Layer

The Guardian enforces. It monitors other agents' outputs against explicit policies, audits the system's behavior against compliance rules, and holds veto power at the gates where something could ship that shouldn't. PII detection, brand voice validation, financial controls, legal clause scanning, factual accuracy checks, safety filtering — all Guardian work. It is the agent that exists specifically to say no.

Autonomy sits at Assist or Recommend. A Guardian watches and flags. It can block, it can escalate, it can require human review. It can never produce the thing it's inspecting. If it produces, it has a conflict of interest with its own inspection, which means it isn't a Guardian anymore. Guardians are orthogonal to the workflow — they sit across it, not inside it.

The boundary rule — a Guardian that optimizes for completion is a compromised watchdog. The reason the rule holds is the same incentive physics as the Orchestrator case. When a Guardian is measured on "how many items got through," it will eventually rubber-stamp to hit the number. When it is measured on "what got caught and what didn't," it stays honest. How you measure the Guardian is how you decide whether it can still do its job in six months.
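The difference between the two measurements shows up in a small worked example. This sketch assumes a labeled sample where the true violations are known, which in practice would have to come from human review. The function names and the sample data are hypothetical.

```python
def pass_through_rate(approved: list[bool]) -> float:
    """Share of items the Guardian approved. Rewards rubber-stamping."""
    return sum(approved) / len(approved)


def catch_rate(approved: list[bool], is_violation: list[bool]) -> float:
    """Share of true violations the Guardian blocked.

    Assumes the sample contains at least one violation.
    """
    blocked = [not ok for ok, bad in zip(approved, is_violation) if bad]
    return sum(blocked) / len(blocked)


# Hypothetical labeled sample: items 2 and 4 are real violations.
is_violation = [False, True, False, True]
rubber_stamp = [True, True, True, True]    # approves everything
honest = [True, False, True, False]        # blocks the two violations

stamp_scores = (pass_through_rate(rubber_stamp), catch_rate(rubber_stamp, is_violation))
honest_scores = (pass_through_rate(honest), catch_rate(honest, is_violation))
```

On this sample the rubber-stamping Guardian scores 1.0 on pass-through and 0.0 on catch rate, while the honest one scores 0.5 and 1.0. Which number goes on the dashboard decides which behavior gets reinforced.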

The anti-pattern is treating the Guardian as a bottleneck to be minimized rather than a layer to be invested in. Teams under launch pressure tune the Guardian toward lenience, reduce the number of checks, raise the approval thresholds, and celebrate the resulting speed-up. The system ships faster for a quarter. Then the first regulated incident occurs in production and the investigation discovers that the Guardian was operating at a fraction of its designed sensitivity because nobody wanted to slow the release train. The Guardian didn't fail. It was demoted until it couldn't.

Guardians are the agents that make scale survivable. Every agent team that runs at volume in a domain with real consequences (content, compliance, finance, healthcare, legal) needs a functioning Guardian. Every team that runs without one is running on luck.

One subtlety worth naming — a Guardian is not the ethics layer. Organizational ethics don't live inside a single agent or a single model; they are distributed across workflows, oversight structures, escalation paths, and refusal conditions. A Guardian makes the places where ethical decisions happen legible. It does not replace the governance architecture around it. Teams that install a Guardian and declare the problem solved have made a category error — they've mistaken a signal layer for a policy layer. The Guardian's value is that it surfaces the decisions. What the organization does with what the Guardian surfaces is a policy and governance question that belongs upstream of any agent.

A second subtlety — well-designed Guardians accumulate institutional memory. Each completed audit enhances the Guardian's knowledge base. Subsequent audits get sharper, not just faster. What began as an efficiency gain evolves into capability expansion. The Guardian isn't just a quality gate. Over enough cycles, it becomes the system's learning organ — the component that remembers what went wrong last time and knows where to look next time. Teams that treat Guardians as static cost centers miss the compounding.

---

How to Identify Which Type You Need

Three questions, in order.

1) Does the agent produce an interpretation or an action? If interpretation, it's an Assistant or an Analyst. If action, it's a Tasker, an Orchestrator, or a Guardian.

2) If it produces interpretation, does it decide anything? If yes, it's an Analyst. If it only prepares material for someone else to decide, it's an Assistant.

3) If it produces action, what kind? If it executes a bounded operation, it's a Tasker. If it coordinates other agents through a multi-step plan, it's an Orchestrator. If it checks other agents' work and holds veto power, it's a Guardian.
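The three questions above amount to a small decision tree, which can be written down directly. The function name `identify` and the `action_kind` labels are assumptions chosen for this sketch.

```python
from enum import Enum


class AgentType(Enum):
    ASSISTANT = "assistant"
    ANALYST = "analyst"
    TASKER = "tasker"
    ORCHESTRATOR = "orchestrator"
    GUARDIAN = "guardian"


# Hypothetical labels for the three kinds of action named in question 3.
ACTION_KINDS = {
    "bounded_operation": AgentType.TASKER,
    "coordinates_agents": AgentType.ORCHESTRATOR,
    "checks_and_vetoes": AgentType.GUARDIAN,
}


def identify(produces_action: bool, decides: bool = False,
             action_kind: str = "") -> AgentType:
    """Walk the three questions in order and return the matching type."""
    # Question 1: interpretation or action?
    if not produces_action:
        # Question 2: does it decide anything?
        return AgentType.ANALYST if decides else AgentType.ASSISTANT
    # Question 3: what kind of action?
    if action_kind not in ACTION_KINDS:
        raise ValueError(f"unknown action kind: {action_kind!r}")
    return ACTION_KINDS[action_kind]


summarizer = identify(produces_action=False, decides=False)
lead_scorer = identify(produces_action=False, decides=True)
publisher = identify(produces_action=True, action_kind="bounded_operation")
```

If a single agent needs two different answers to the same question, that is usually the sign it should be two agents.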

A useful second pass: run the agent's current design against all five boundary rules. Does the design violate any of them? If yes, you've collapsed two types into one. Separate them. You'll ship slower for a week and run cleaner forever.

---

The Boundary Rules at a Glance

Print these. Stick them above the screen.

1) An Assistant that decides is an uncontrolled Analyst.

2) An Analyst never pulls the trigger.

3) A Tasker that interprets is an uncontrolled Analyst.

4) An Orchestrator that governs compliance is a conflicted authority.

5) A Guardian that optimizes for completion is a compromised watchdog.

Every agent project that fails in production violates one of these. The rules are cheap. The violations are not.
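The five rules can also be run mechanically against a design document. The sketch below assumes each agent is described by a declared type and a set of capability labels. The `FORBIDDEN` table, the capability names, and the `audit` function are hypothetical, and a real audit would still need a human to judge what an agent's prompt and tools actually permit.

```python
# One forbidden capability per type, mirroring the five boundary rules.
# Capability labels are assumptions made for this example.
FORBIDDEN: dict[str, set[str]] = {
    "assistant": {"decide"},
    "analyst": {"act"},
    "tasker": {"interpret"},
    "orchestrator": {"govern_compliance"},
    "guardian": {"optimize_completion"},
}


def audit(design: dict[str, tuple[str, set[str]]]) -> list[str]:
    """Return one message per boundary-rule violation in the design."""
    violations = []
    for name, (agent_type, capabilities) in design.items():
        for cap in sorted(capabilities & FORBIDDEN[agent_type]):
            violations.append(f"{name}: {agent_type} must not {cap}")
    return violations


design = {
    "lead_scorer": ("analyst", {"score", "act"}),       # scores and auto-enrolls
    "mailer": ("tasker", {"send_email"}),               # stays in its lane
    "pipeline": ("orchestrator", {"dispatch", "govern_compliance"}),
}
violations = audit(design)
```

Here the audit reports `lead_scorer` and `pipeline`, and each finding points at a capability to move into a separate agent.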

---

FAQ

What are the five AI agent types?

Assistant, Analyst, Tasker, Orchestrator, and Guardian. Each has a single role and a boundary rule that prevents role confusion. Assistants prepare. Analysts recommend. Taskers execute. Orchestrators coordinate. Guardians enforce.

Why does agent type matter if the model is the same?

The model is interchangeable. The role the model plays in the system is not. Two instances of the same model can be deployed as an Analyst and a Guardian inside the same workflow and the system works — because the roles are structurally different, not because the models are. Role confusion is an architecture problem, not a model problem.

What happens when you collapse two agent types into one?

You lose the review layer that existed between them. An Assistant that decides is an Analyst with no oversight. An Analyst that acts is an Analyst + Tasker with no pause for review. An Orchestrator that governs compliance has no one to check it. Every collapse removes an accountability surface. The collapse usually ships faster for a quarter and then fails irrecoverably.

How do you audit an agent system for type violations?

Run the five boundary rules against every agent in the design. For each violation, the remediation is almost always the same: split the agent into two agents, with a handoff between them that makes the previously hidden decision explicit. The split costs a week of implementation and recovers the audit trail permanently.

Is this framework specific to Uncanny Labs?

The taxonomy draws from the broader agentic AI literature and from the systems we've built and shipped at Uncanny Labs. What makes it UncannyOS is the formalization — the boundary rules, the anti-patterns, and the identification logic that turn a set of role labels into a design vocabulary that holds up under load. We use it across every workflow we ship, internally and for clients.

---

Five types. One job each. Five boundary rules that keep the jobs from collapsing into one another. Start there, and the agent system you're designing will hold its shape under load.

Types tell you what each agent is for. The next question is what each agent is made of. The Four Components of an AI Agent goes inside each type — Brain, Memory, Tools, Governance. The Five Workflow Archetypes covers how these five types compose into real workflows. The Progressive Autonomy Ladder covers how each agent inside any workflow earns its way from Assist to Own. The Agent × Archetype Matrix is the capstone — a full walkthrough of Uncanny Works where every primitive above shows up inside one working system.

Arthur Simonian, Founder, Uncanny Labs · AI Workforce Agency