In November 2025, McKinsey published their annual State of AI report. The headline number was triumphant. 88% of companies now use AI regularly in at least one function. A year earlier that number was 78%. A year before that, 55%. Enterprise AI adoption had, in McKinsey's phrasing, reached near-universal saturation.

Buried three pages deeper was a second number. Only 39% of respondents could point to any measurable impact on earnings. In most of those cases, AI contributed less than 5% of total EBIT.

Four months before McKinsey, MIT Project NANDA had published a harder version of the same finding. Their report, titled "The GenAI Divide," studied hundreds of enterprise GenAI deployments. Despite $30 to $40 billion in enterprise GenAI spend over the prior year, 95% of the organizations studied could point to no business return. A separate tally inside the same report found that 95% of integrated AI pilots never made it to production. Most of the money had gone in. Almost none of the value had come out.

The two reports were measuring the same phenomenon from different angles.

Call it the AI Value Gap.

The gap is not a technology problem. The models work, the APIs are mature, and the infrastructure gets cheaper every quarter. The gap exists between the corporate decision to adopt AI and any observable change in how the business actually performs. In 2026, that gap is wider than it has ever been.

Walk into any of the companies on the adopter side of the 88% and the work looks modern. The dashboards are polished. The Slack channels tag Claude and ChatGPT. The website has an AI support agent. The intranet has a GenAI wiki everyone used once and forgot about. Quarterly slides say "AI." Procurement says "AI." The bottom line says nothing has changed.

Something about the whole operation feels off. Senior leaders feel it. ICs feel it. Nobody says it out loud.

That feeling has a name.

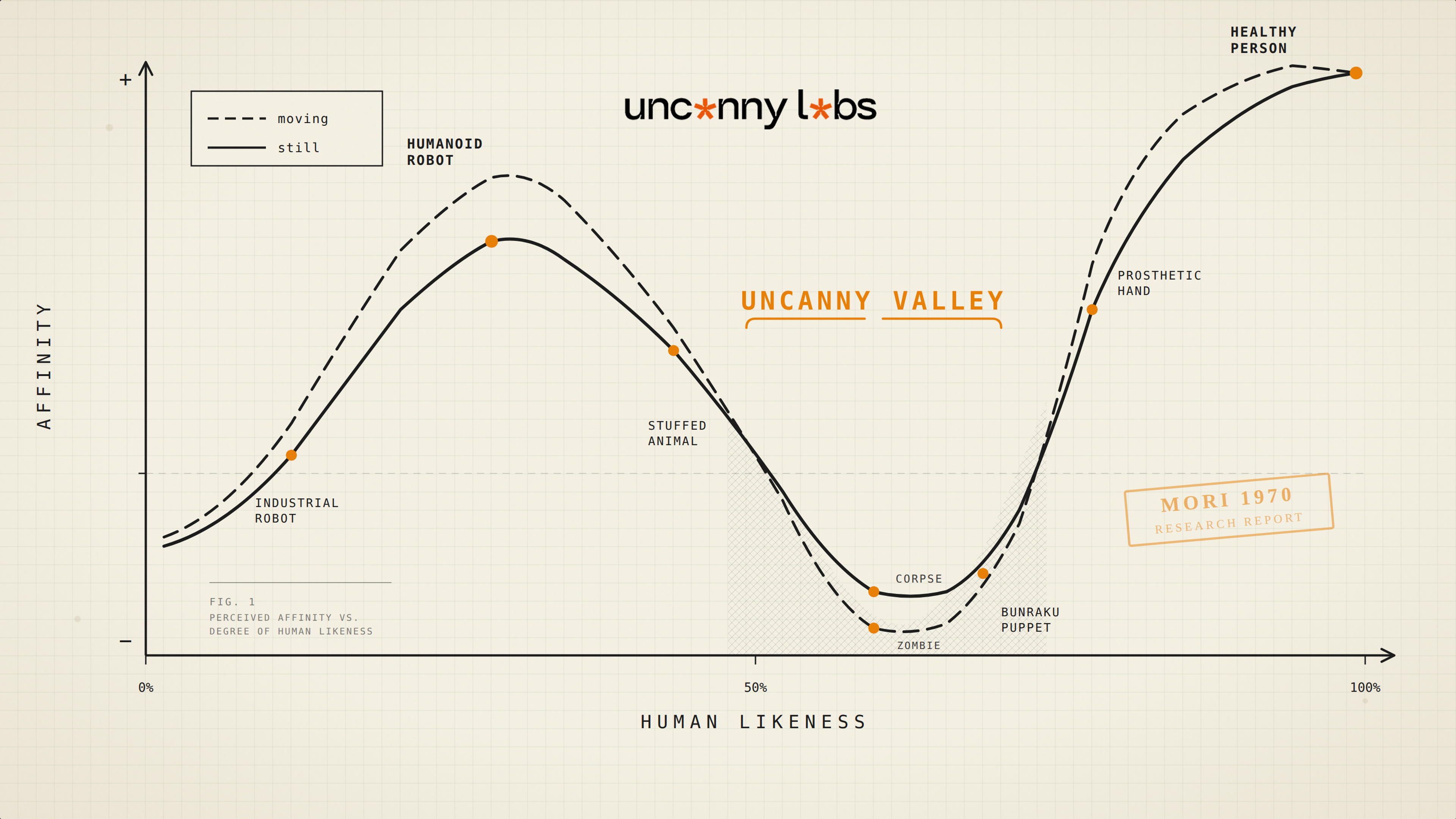

In 1970, Masahiro Mori coined "uncanny valley" to describe the unease people feel around something almost-but-not-quite human — a synthetic face that looks close to real, but not close enough, and the closer it gets without landing the worse it feels. The same valley has opened up inside knowledge work. Consultants promised AI transformation. What got delivered was AI "integrated" onto the same old workflows. Part human, part machine. Fragmented across tools, blurred in responsibility, heavy on cognitive load, thin on trust.

Welcome to the uncanny valley of AI-powered work.

The valley is where the AI Value Gap lives. The reason 39% of companies see EBIT impact and 61% don't is not that the 61% chose the wrong model or the wrong vendor. They chose the same models and vendors as everyone else. The difference is what happened on arrival.

A workflow built for humans is not really a workflow. It's a hundred small acts of tacit coordination that a team has agreed to without writing them down. The senior account manager who "just knows" which deals to prioritize. The PM who knows which designer is fast today and which one is stuck. The Slack thread from three months ago that contains the decision governing this week's work. The unwritten rule that finance takes 48 hours to approve even though the SLA says 24. Workflows like that cannot be handed to an agent. They don't execute end-to-end for an agent because they don't execute end-to-end for a human either: they execute through human judgment at a dozen invisible handoffs.

When AI gets added to that workflow, it lands between those handoffs and stalls at the first one that isn't documented. The AI executes the parts it can execute. The rest go back to humans, who now have an additional job — translating their own tacit coordination into something the AI can work with. The workflow gets worse, not better. Productivity drops instead of climbing. The dashboard says AI is handling X% of tickets. The operation feels slower than it did before.

The 95% of pilots that never reach production fail for the same reason, one step earlier. A pilot that tests AI inside an un-redesigned workflow is measuring the wrong thing. It measures whether the model works. The model works. What it does not measure is whether the surrounding workflow can reliably consume the model's output — whether the handoffs are explicit, whether the decision criteria are machine-readable, whether a human is cleanly positioned as the director and not the executor. In the sandbox the pilot passes. In production it hits the gap. It fails and gets quietly binned at the end of the quarter.
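One way to picture the check a pilot usually skips is a small audit of the workflow itself. The sketch below is illustrative only: the `Step` fields, the step names, and the rules are invented for this example and do not come from the MIT or McKinsey reports. It assumes each step either has a written decision rule or relies on something a person "just knows."

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Step:
    name: str
    owner: str                    # "machine" or "human" in the proposed design
    decision_rule: Optional[str]  # the written rule, or None if it is tacit


def audit(steps: list[Step]) -> list[str]:
    """Names of steps whose decision rule has never been written down."""
    return [s.name for s in steps if s.decision_rule is None]


def first_stall(steps: list[Step]) -> Optional[str]:
    """First machine-owned step with no written rule: where an agent would stall."""
    for s in steps:
        if s.owner == "machine" and s.decision_rule is None:
            return s.name
    return None


# Hypothetical workflow, loosely echoing the examples in this article.
workflow = [
    Step("intake", "machine", "accept requests submitted through the form"),
    Step("prioritize deals", "machine", None),  # the account manager "just knows"
    Step("finance approval", "human", "approve within 48 hours"),
    Step("final sign-off", "human", None),
]

print(audit(workflow))
print(first_stall(workflow))
```

In this toy version the model can be perfect and the pilot still stalls at "prioritize deals," because nothing tells the agent how that decision gets made.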

MIT's own diagnosis, embedded in the report's language, is that the organizations crossing the GenAI Divide are the ones "aggressively solving for learning, memory, and workflow adaptation." Decoded: the ones that redesigned the work before deploying the AI. Not the ones with the best model or the biggest budget — the ones that took the workflow apart and rebuilt it to run on machines.

This pattern repeats in every domain where AI has been deployed at scale. Radiologists working alongside AI imaging systems don't read fewer scans. They read different ones, the flagged ones, where judgment matters most. The workflow for radiology was rebuilt around the new division of labor. Corporate legal teams deploying AI contract review stopped reading every clause top to bottom and started reviewing flagged anomalies. The review workflow was rebuilt. Klarna, the loud case of 2024, spent a year replacing customer service agents with an OpenAI-powered assistant and claiming 700 full-time employees' worth of capacity, then quietly hired the humans back when the customers started complaining. The second iteration worked because the workflow was rebuilt: AI handles routine volume, humans handle nuance, and routing logic carries the handoff between them.
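A minimal sketch of what routing logic like that might look like, under loud assumptions: the intent names, the confidence threshold, and the `Ticket` fields are all hypothetical and are not drawn from Klarna or from any report cited here. The point is only that the handoff rule is written down where both the AI and the humans can see it.

```python
from dataclasses import dataclass

# Hypothetical values, chosen for illustration only.
ROUTINE_INTENTS = {"order_status", "refund_status", "password_reset"}
CONFIDENCE_FLOOR = 0.8


@dataclass
class Ticket:
    intent: str                # label assigned by an upstream classifier
    confidence: float          # classifier confidence in that label, 0 to 1
    customer_asked_for_human: bool = False


def route(ticket: Ticket) -> str:
    """Return "ai" or "human" using explicit, written-down rules."""
    # A customer who asks for a person always gets one.
    if ticket.customer_asked_for_human:
        return "human"
    # Routine, confidently classified volume goes to the AI.
    if ticket.intent in ROUTINE_INTENTS and ticket.confidence >= CONFIDENCE_FLOOR:
        return "ai"
    # Everything else (nuance, low confidence, unknown intents) goes to a human.
    return "human"


print(route(Ticket("order_status", 0.95)))
print(route(Ticket("billing_dispute", 0.99)))
```

The default branch is the design choice that matters: anything the rules do not explicitly claim for the AI falls back to a person.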

McKinsey's 2025 data puts a number on the difference. Companies that redesign workflows before deploying AI move 2.8x faster than those retrofitting AI onto existing processes. Not 28% faster. Nearly three times.

Every successful AI deployment at scale looks the same underneath. The work was redesigned first. The AI was deployed second.

Every failed deployment looks the same, too. The AI was deployed first. The work was left as it was.

The 88 / 39 / 95 gap is not three problems. It is one. The companies sitting inside it are not there because their AI failed. They are there because their workflows were never rebuilt to run it. Every token the AI burns in a workflow that wasn't redesigned for it is a wasted token. Multiply that by billions of tokens across thousands of deployments and you get $30 to $40 billion in enterprise spend with almost nothing to show for it.

Death by a thousand wasted tokens.

The fix is not better models. It is not bigger AI budgets. It is the step most companies skipped. Audit the workflow as it actually runs, not as the org chart says it runs. Redesign it for machine execution in the places machines belong, and for human judgment in the places only humans can carry. Build the handoff architecture between them. Deploy AI inside that design, not on top of the old one.

This is the work Uncanny Labs does before any agent gets deployed.

The AI Value Gap is what the uncanny valley of AI-powered work looks like on an earnings report. Better models won't close it. More adoption won't either. The valley gets crossed by rebuilding the work underneath — not by upgrading the AI on top of it.

Start with the work. The AI comes after.

Arthur Simonian, Founder, Uncanny Labs | AI Workforce Agency

If your team is wiring AI into a workflow that was never redesigned for it, we run 30-minute calls to map where the redesign would start. Book one →